PractiTest Updates

March 2022 Updates

Here are some updates from the past month

Product

- Use Batch Edit to modify Test Type

From user request to feature: you can now edit the test type when batch editing tests, adding more flexibility and customizability to your process.

- Onboarding new users just became easier - demo data is now available by default for new projects in new accounts

From now on, when you create your very first project in PractiTest, demo data will be added to it automatically to help you experience PractiTest’s value faster.

Coming up

- PractiTest Live Training

Join our customer success team and ask anything you want to know. April 12th, 10:00 EDT / 16:00 CEST. Sign up here

Can’t make it?

Join us on April 19th, 14:00 EDT / 20:00 CEST. Sign up here

- How to succeed as a tester in a DevOps environment - a guest webinar with Huib Schoots

April 27th, 10:00 EDT / 16:00 CEST. Sign up here

PractiTest and Beyond

- Top 20 software testing leaders to follow on Twitter

Check out this new article listing our industry’s top thought leaders to follow. Read it now

February 2022 Updates

Here are some updates from the past month

Product

-

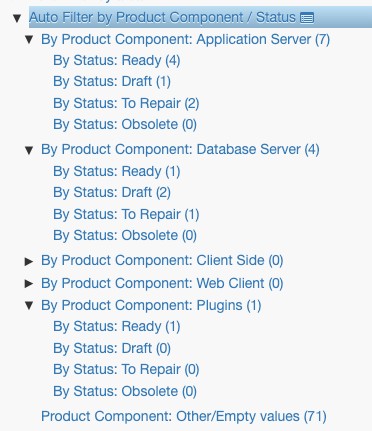

Testing Data Visibility and Organization - Multilevel Auto Filters

Taking test data organization to the next level! With Multilevel Auto Filters, you can now create a child Auto Filter for each sub-filter of a parent Auto Filter. Read more about it here

-

Usability and Productivity - Rename Linked List values

We keep listening to our customer’s requests and adjusting our product to better fit your needs; we added the option to rename your linked list values via the settings window. -

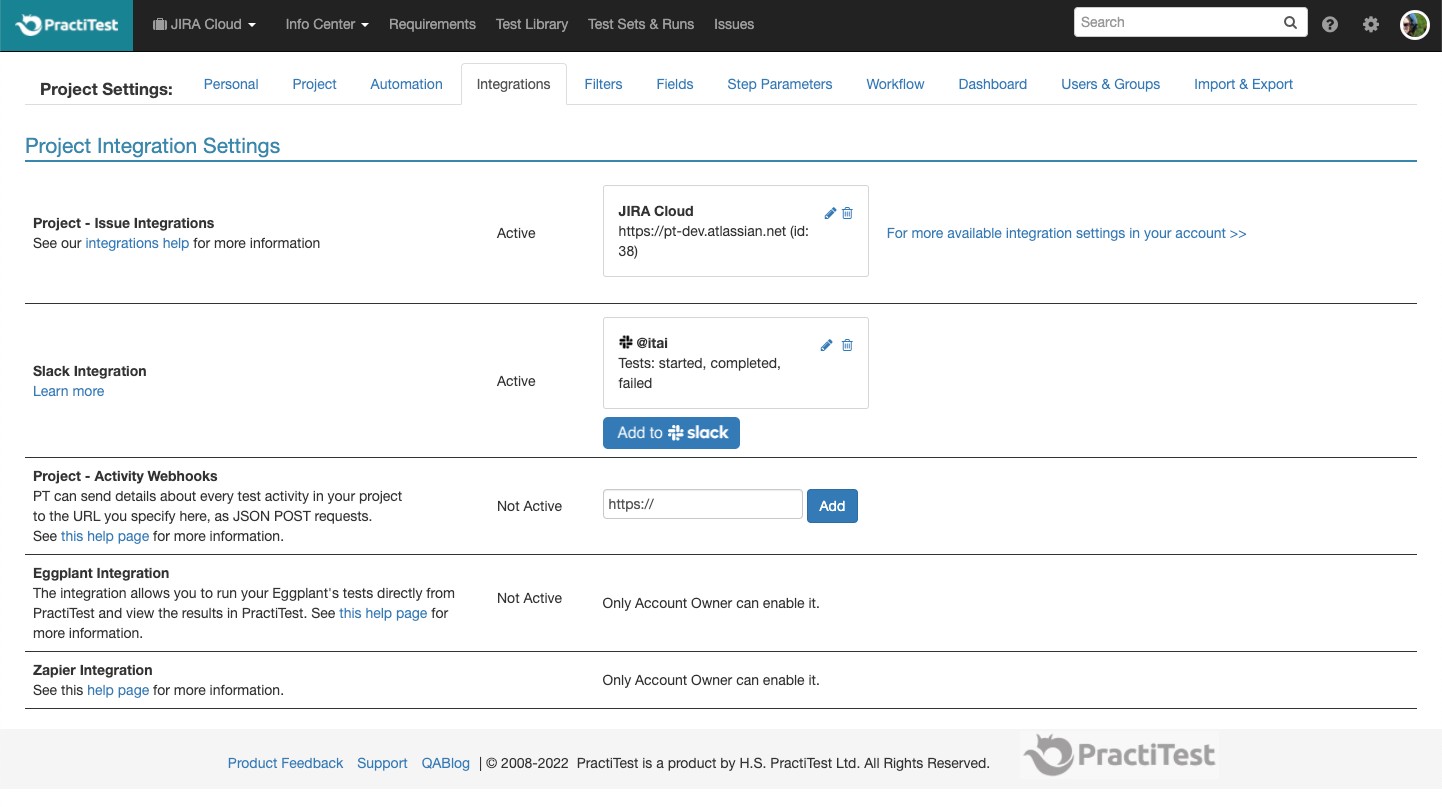

Integrations - Integration settings just became easier

We are introducing an easier way to manage your integrations - you can see it in the integrations settings windows of your projects and account.

Coming up

- PractiTest Live Training

Join our customer success team and ask anything you want to know. March 8th, 11:00 EST / 17:00 CET. Sign up here

Located in APAC?

Join us on March 22nd, 14:00 EDT / 11:00 PDT. Sign up here

- Increasing Productivity by Uncovering Costs of Delay - a guest webinar with Jutta Eckstein

March 9th, 10:00 EST / 16:00 CET. Sign up here

January 2022 Updates

Here are some updates from the past month

Product

-

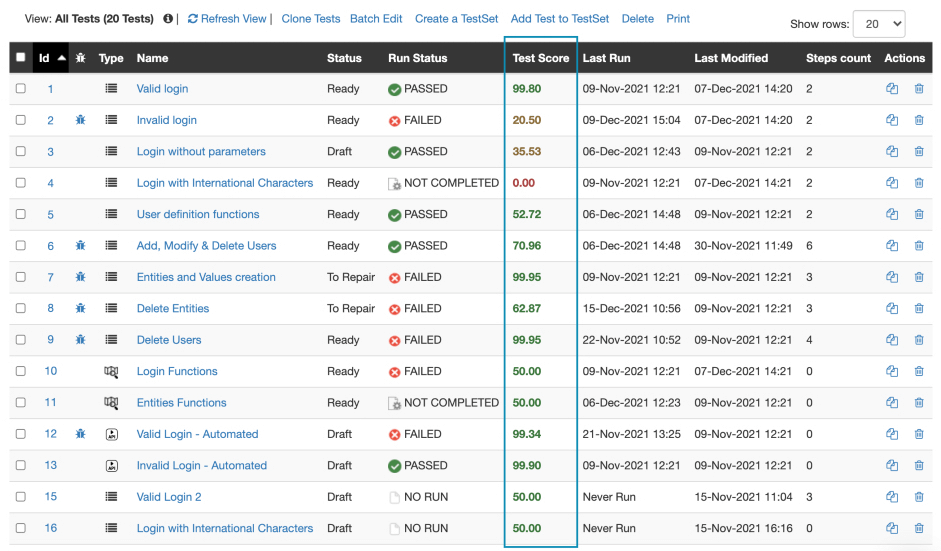

AI-Based Test Management - Introducing PractiTest’s New Test Score Functionality

The new Test Score functionality analyzes the actual testing in your projects across a range of parameters.

With the Test Score, you can easily understand the value each test provides to your project and make better decisions, such as choosing and prioritizing the best tests to run from a specific group, listing the tests that should be retired from your execution schedule, and more. Read more about it here

-

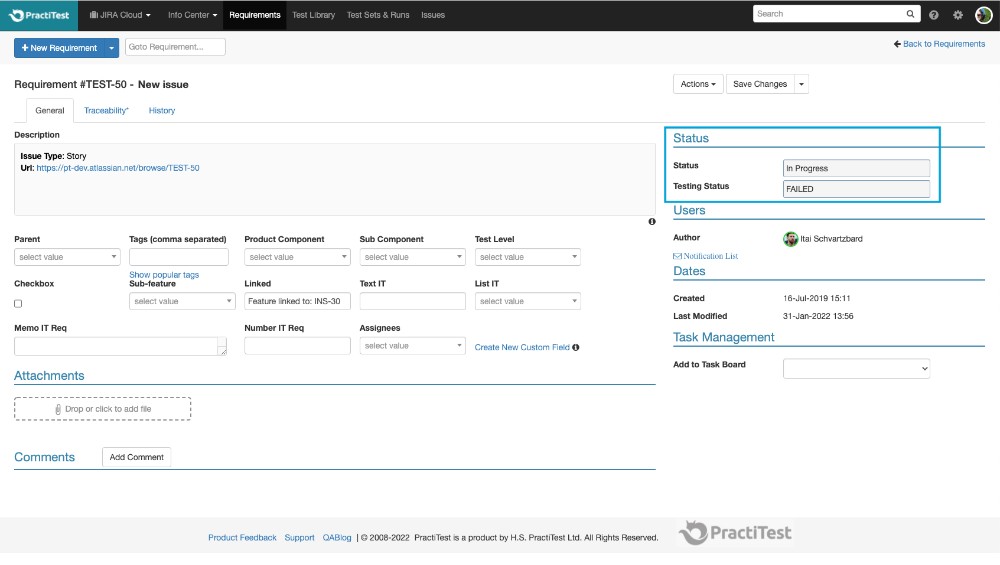

Jira Integration - Adding Jira status field to PractiTest requirement

Inside a PractiTest requirement, you will now find the testing status of the tests covering this requirement, and the Jira status of the Jira issue linked to this requirement. This gives you a better understanding of the status of your tickets and helps you streamline your process.

- Automation - Additional API GET Instance parameters

When creating a GET Instance request in the PractiTest API, you can now filter by test display ID, not only by system display ID. Check out all the API request options here
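For readers who script against the API, here is a minimal sketch of how a GET Instances request filtered by test display ID might be built. The base URL, the endpoint path, and the `test-display-ids` query parameter name are assumptions for illustration only. Confirm the exact names in the PractiTest API documentation before relying on them. Authentication is omitted.

```python
from __future__ import annotations

from urllib.parse import urlencode

# ASSUMPTION: illustrative base URL and path; verify against the PractiTest API docs.
BASE_URL = "https://api.practitest.com/api/v2"


def build_instances_url(project_id: int, test_display_ids: list[int]) -> str:
    """Return a GET Instances URL filtered by test display IDs.

    The "test-display-ids" parameter name is HYPOTHETICAL. It only illustrates
    filtering by test display ID rather than by system ID.
    """
    if not test_display_ids:
        raise ValueError("at least one test display ID is required")
    # Join the IDs into a comma-separated list and keep the commas unescaped.
    query = urlencode(
        {"test-display-ids": ",".join(str(i) for i in test_display_ids)},
        safe=",",
    )
    return f"{BASE_URL}/projects/{project_id}/instances.json?{query}"


if __name__ == "__main__":
    # Hypothetical project ID and test display IDs.
    print(build_instances_url(1234, [12, 15]))
```

The URL construction lives in one small function, so the real parameter name can be substituted in a single place once it is confirmed.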

- Usability and Productivity - Attachment size limit increased to 50MB

From now on, you can attach files of up to 50MB to any of your entities.

Coming up

- PractiTest Live Training

Join our customer success team and ask anything you want to know. February 8th, 10:00 EST / 16:00 CET. Sign up here

Located in APAC?

Join us on February 22nd, 17:00 AEDT / 14:00 AWST (Australian times). Sign up here

- PractiTest’s test automation management capabilities - webinar by Joel Montvelisky

Join PractiTest’s chief solution architect as he goes over PractiTest’s xBot test automation framework and additional test automation management capabilities.

February 10th, 2022, 10:00 EST / 16:00 CET. Sign up here

- How to bring accessibility into your teams - Guest webinar by Laveena Ramchandani

Learn how to build accessibility testing into your teams.

February 16th, 2022, 10:30 EST / 16:30 CET. Sign up here

December 2021 Updates

Here are some updates from the past month

Product

- So, what did we have in 2021?

Putting our users first, these are the main PractiTest areas we focused on to help users release better, faster, and with confidence:

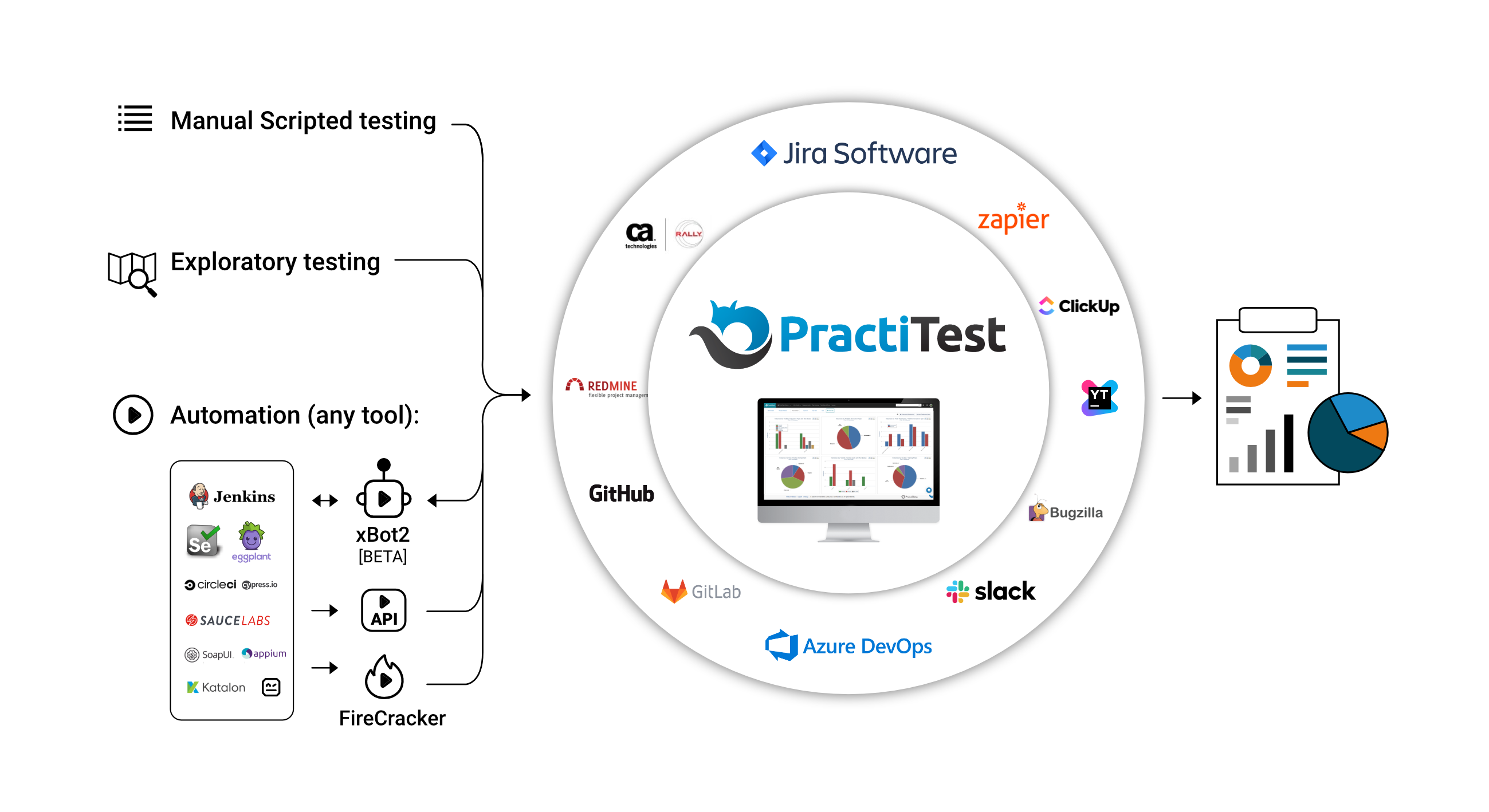

- Automation - We are making PractiTest the most advanced test management solution by continually improving its test automation integrations with multiple unique solutions.

- Integrations - Use PractiTest as a hub for all your QA information, with more integrations added and advanced improvements to the two-way Jira integration.

- Visualization - PractiTest’s new visualization features make your data more valuable and help you make the right decisions.

- Functionality - Sometimes the small things make the difference. With improvements to everyday features, PractiTest can now save you even more time and money.

You can read our 2021 yearly summary here

But we are not done…

2022 has just started, and we are already releasing another integration improvement:

- Jira Integration improvement - Report a linked issue field from a PractiTest run

When reporting an issue directly from a PractiTest run, you can now choose a linked issue and the relationship between the two issues, just like in Jira. This is available only if both the Jira project you are reporting to and the issue type you chose contain this field in Jira.

Coming up

- PractiTest Live Training

Join our customer success team and ask anything you want to know. January 11th, 14:00 EST / 20:00 CET. Sign up here

- Who’s Ready for Post-Modern Testing? Guest Webinar by Alan Page

Alan Page, A/B Testing podcast star and worldwide keynote speaker, will be our webinar guest. January 19th, 2022, 10:00 EST / 16:00 CET. Sign up here

PractiTest and Beyond

- 10 testing events in 2022 that you don’t want to miss

We gathered the freshest and leading events to attend so you can stay up to date with everything going on in the industry. Check it out

November 2021 Updates

Here are some updates from the past month

Product

- ClickUp integration

We keep adding new integrations to our ecosystem, and this time we are introducing a ClickUp integration.

From now on, you can integrate ClickUp with PractiTest to report issues from PractiTest runs as ClickUp tasks and maintain overall testing visibility. Read more about it here

Coming up

- 10 Ideas to help you correctly evaluate 2021 and effectively plan 2022

Join Joel Montvelisky for a webinar that will help you wrap up this year and prepare for the next one. December 8th, 10:00 EST / 16:00 CET. Sign up now

- State of Testing™

For the last 10 years, we have conducted the “State of Testing” survey to track our industry’s current and future trends. We invite you to take part and influence this year’s results. Take the survey here

PractiTest and Beyond

- How to Achieve Test Traceability For Compliance Requirements

Find out all you need to know about test traceability for regulatory requirements, such as the FDA Validation Guidelines and other software safety standards and guidelines, in this article

October 2021 Updates

Here are some updates from the past month

Product

- Focusing on Automation

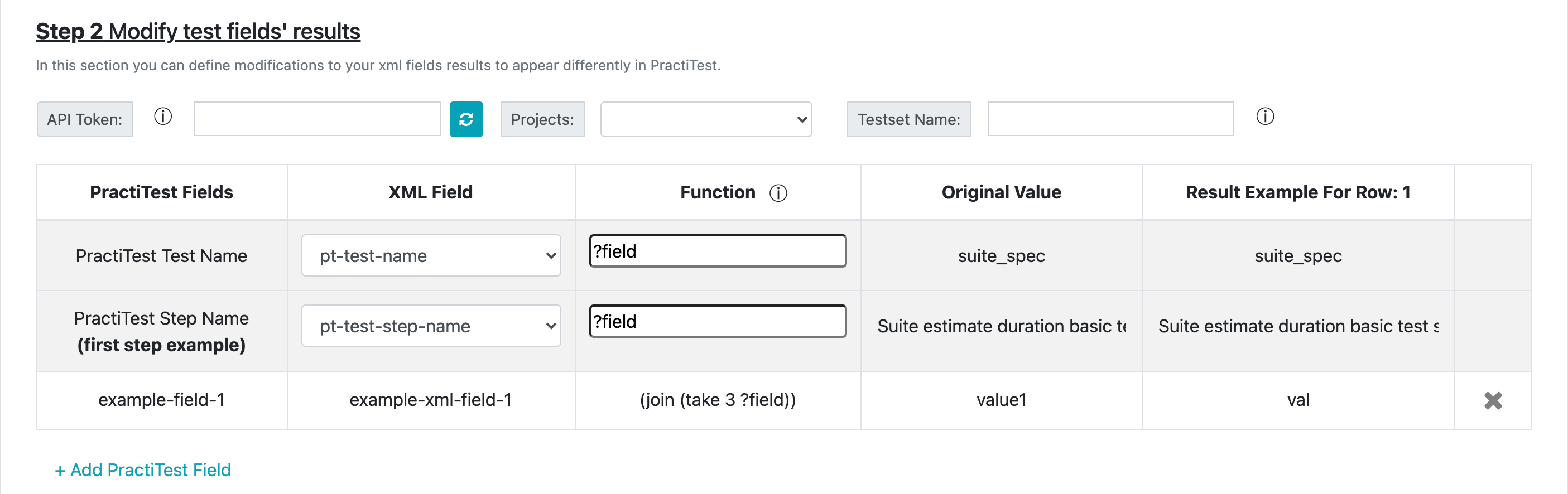

- More parameter control in FireCracker

From now on, when defining field modifications based on your parsed Surefire XML results, you can also define parameters for test and step names as they will appear in PractiTest.

Read more about it here

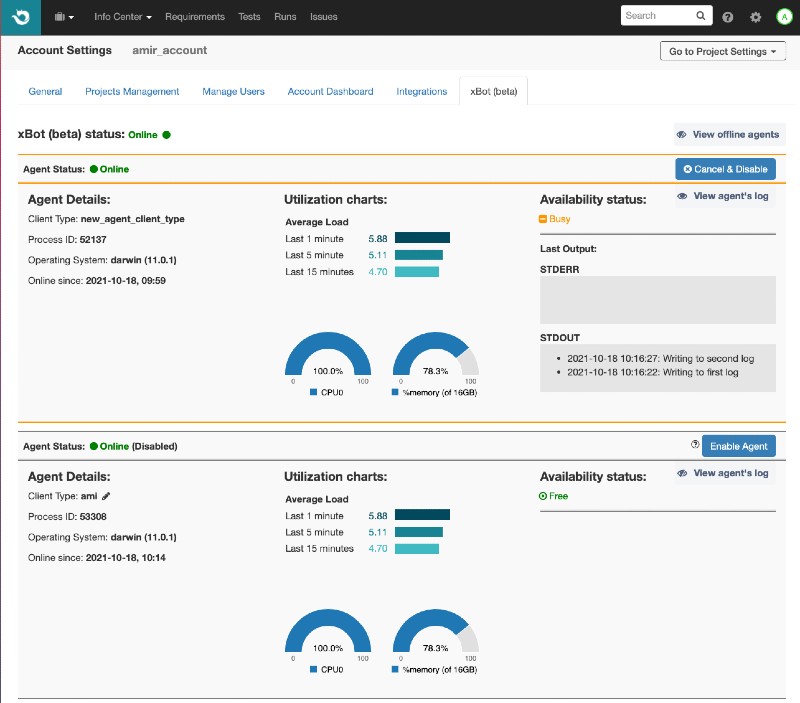

- xBot agents view - for better admin control

To give you better control over the agents running your automated tests, we added an agents view to the account admin page. There you can see active and inactive agents, enable or disable them, get their running parameters, edit the client ID, and more!

Learn more about it here

- Our clients ask - We listen

- Create Dashboard graphs for Instances and Runs based on text, number, date and User custom fields

When creating a dashboard item based on the Instance or Run entity, you can now choose more fields as filters for your graph.

- Linked issues on the instance grid now show ALL linked issues, not only those from the last run

From now on, you can see ALL linked issues of each instance in the test set right in front of you, not only those from the last run!

Coming up

- OnlineTestConf

Last chance to join the upcoming Fall OnlineTestConf, November 3-4. Sign up now

- State of Testing™

Take part in the longest-running survey in the industry! More details to come.

Check out the State of Testing™ page

- Metatesting: The secret questions you need to ask yourself to be a world-class tester

Join our upcoming webinar with Paul Merill, November 17th, 10:30 EST / 16:30 CET. Sign up now

September 2021 Updates

Here are some updates from the past month

Product

- More focus on the automation API

From now on, you can create and update any automation field through API calls. To see all the API options, go here

Coming up

- Automation: Embrace it, don’t be scared - webinar by Ajay Balamurugadas

Learn how to approach automation and grow your career around it. October 20th, 10:00 EDT / 16:00 CEST. Sign up now

Trending on the web

- A tool review by Alan Page

We are honoured that a thought leader like Alan Page wrote “I feel like PractiTest provides a great way to be the right tool for a lot of teams – and that’s a huge achievement.”

PractiTest and Beyond

- Learn about Functional Testing

All you need to know about functional testing, now in this new guide

August 2021 Updates

Here are some updates from the past month

Product

-

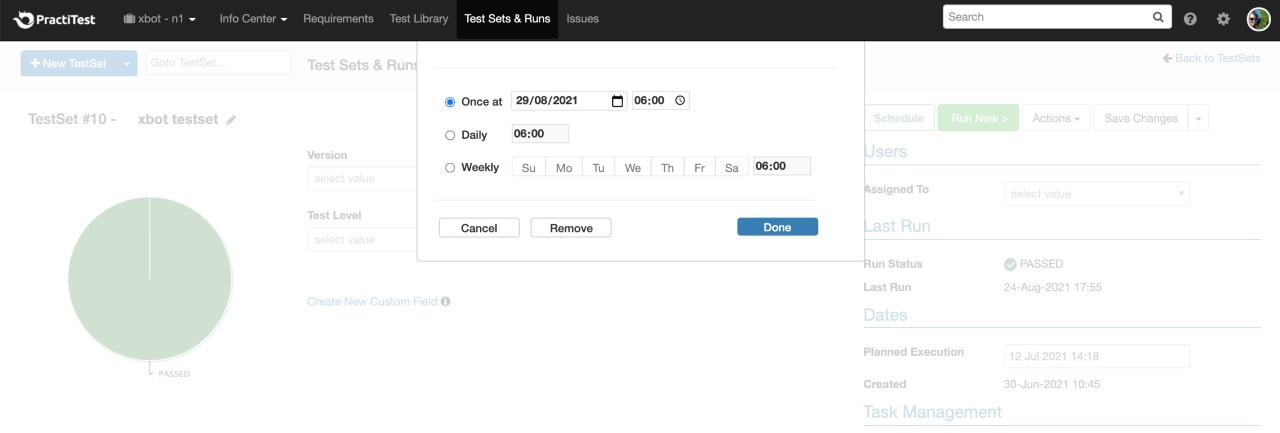

Automation Test Management #1 - xBot improvements

PractiTest’s automation framework, xBot, keeps improving. From now on, you can schedule your automation runs from within PractiTest to run on a daily, weekly, or monthly basis, or on a specific date. If things change and you want to delete a pending run or kill a running test, you can do so from your xBot dashboard.

- Automation Test Management #2 - CircleCI integration via FireCracker

The CircleCI and PractiTest integration just got better! CircleCI has a new PractiTest FireCracker Orb that automatically integrates all your CircleCI results into PractiTest. Click here for more information and examples.

Coming up

- Growing an Experiment-driven Quality Culture

Sign up for this guest webinar by Elisabeth Hocke here

PractiTest and Beyond

- Learn about Exploratory Testing

and how to apply it using PractiTest in this new guide

July 2021 Updates

Here are some updates from the past month

Product

- Multiple Integrations per account

Want to integrate PractiTest with two different Jira accounts? With Azure DevOps and Jira Server? Have a team that works with both a project management tool and a bug tracker? This update is for you. From now on, you can integrate your PractiTest account with two different issue management tools, allowing you to maintain seamless control of your entire process.

- Auto-sync Jira filters

Syncing a Jira filter to PractiTest is nothing new, but now you can also keep the sync running and update PractiTest with every change you make to issues in the synced filter! Read more about it here

- Project history view

From now on, PractiTest will combine your actions in the history tab to have all actions made by the same user in the same day on the same entity - in one record. This will help you have a clearer view of your actions log history. -

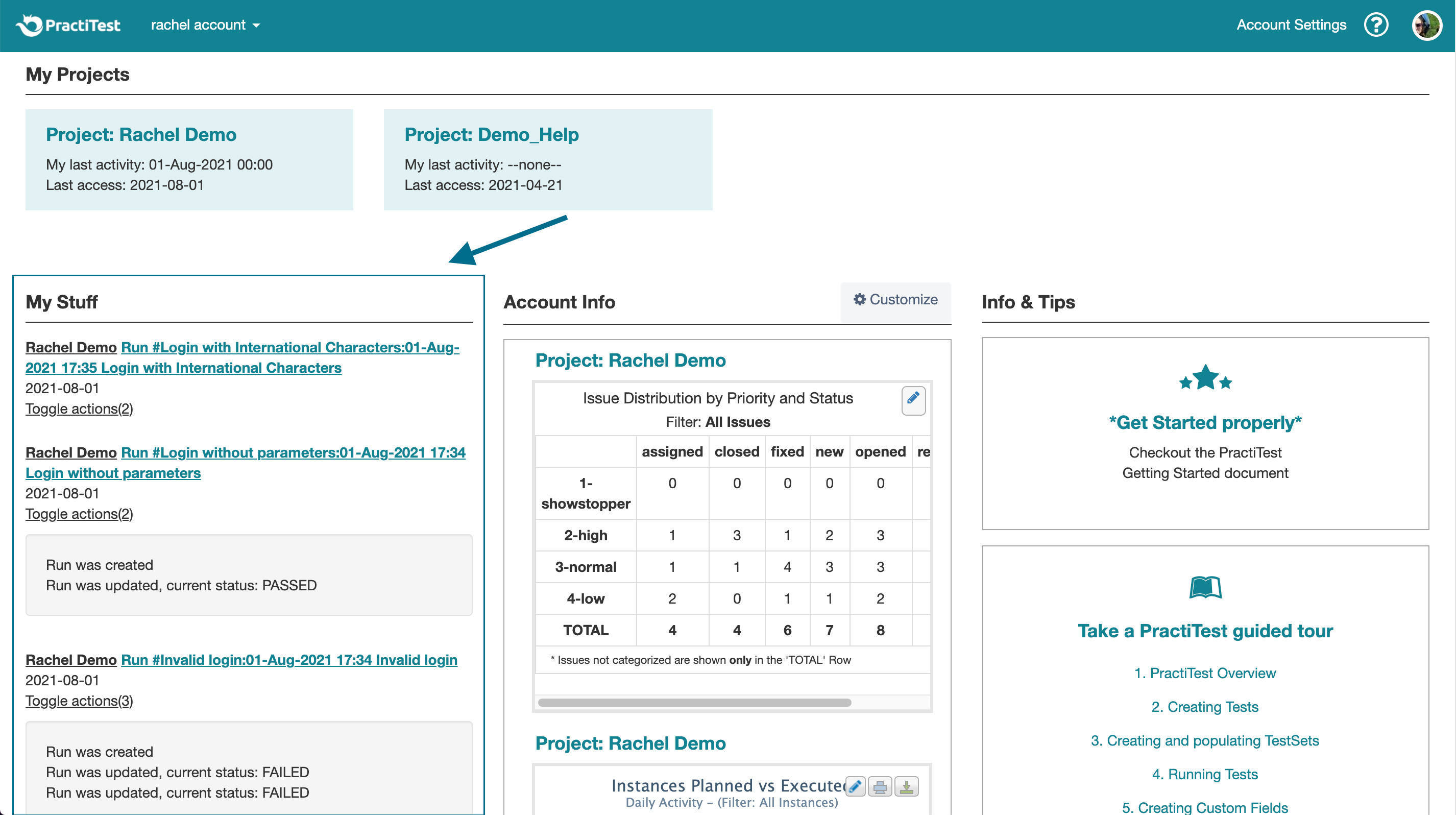

More stuff in My Stuff

My stuff section in the account landing page will now also include your Test Sets runs logs. This gives you a quick overview of relevant actions you made recently and also easy access directly to them

PractiTest and Beyond

- Missed our guest webinar by Rob Meaney, “Testing is Not the Goal”?

It’s not too late! Watch it here