PractiTest Updates

June 2021 Updates

Here are some updates from the past month

Product

- FireCracker improvements for better automation results configuration

The new FireCracker version lets you choose whether to import XML test-cases as separate tests in PractiTest or as multiple steps in one PractiTest test. You can also create more than one TestSet for your test-case run results, based on your XML data. Read more about it here
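To picture the two options, here is a minimal Python sketch. It is not FireCracker's code or configuration: it assumes JUnit-style XML input, and the function names and result dictionaries are hypothetical, made up for illustration only.

```python
import xml.etree.ElementTree as ET

# Hypothetical JUnit-style sample; real automation output may look different.
SAMPLE_XML = """
<testsuite name="checkout">
  <testcase classname="cart" name="add_item"/>
  <testcase classname="cart" name="remove_item">
    <failure message="item still listed"/>
  </testcase>
</testsuite>
""".strip()


def case_status(case):
    """Return FAILED if the test-case has a failure or error child, else PASSED."""
    failed = case.find("failure") is not None or case.find("error") is not None
    return "FAILED" if failed else "PASSED"


def as_separate_tests(suite):
    """Option 1: every XML test-case becomes its own test."""
    return [
        {"test": case.get("name"), "status": case_status(case)}
        for case in suite.iter("testcase")
    ]


def as_single_test_with_steps(suite):
    """Option 2: the suite becomes one test and every XML test-case becomes a step."""
    steps = [
        {"step": case.get("name"), "status": case_status(case)}
        for case in suite.iter("testcase")
    ]
    # The single test fails as soon as any of its steps failed.
    overall = "FAILED" if any(s["status"] == "FAILED" for s in steps) else "PASSED"
    return {"test": suite.get("name"), "status": overall, "steps": steps}


suite = ET.fromstring(SAMPLE_XML)
separate = as_separate_tests(suite)
single = as_single_test_with_steps(suite)
```

With the sample above, the first option yields two tests and the second yields one test named after the suite with two steps.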

Coming up

- Testing is Not the Goal - Guest Webinar by Rob Meaney

Learn from a Testability expert how to deal with the new testing reality. Don’t miss out: Wednesday, July 21st, 10:00 EDT / 16:00 CEST. Sign up here

PractiTest and Beyond

- Learn about sanity testing in software development and maintenance projects.

Read our latest QA Learning resource

May 2021 Updates

Here are some updates from the past month

Product

- API Improvements for more accurate results

From now on you can create or update steps in PractiTest using the API. Read more about it here
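As a rough idea of what such a call could look like, the Python sketch below only builds a request for creating a step and does not send it. The base URL is a placeholder, and the path, the payload shape and the attribute names (`test-id`, `name`, `expected-results`) are assumptions for illustration; authentication is left out. Check the API documentation for the actual details.

```python
import json
import urllib.request


def build_step_request(base_url, project_id, test_id, name, expected_results):
    """Build (but do not send) a POST request that would create one step."""
    # Assumed path; confirm it in the API reference before use.
    url = f"{base_url}/projects/{project_id}/steps.json"
    # Assumed payload shape and attribute names, for illustration only.
    payload = {
        "data": {
            "type": "steps",
            "attributes": {
                "test-id": test_id,
                "name": name,
                "expected-results": expected_results,
            },
        }
    }
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Placeholder base URL and IDs; nothing is sent over the network here.
request = build_step_request(
    "https://example.invalid/api/v2",
    project_id=1234,
    test_id=5678,
    name="Open login page",
    expected_results="Login form is displayed",
)
```

Sending the request (for example with `urllib.request.urlopen`) would additionally need your account's credentials.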

Coming up

- PT labs: Create your Test Management Center of Excellence, by Joel Montvelisky

June 16th, 10:00 EDT / 16:00 CET. Sign up here

- Mobile Test Management Done Right, webinar by Daniel Knott

June 23rd 10:00 EDT / 16:00 CET. Sign up here

PractiTest is on the Map

- Learn how to turn your personal weaknesses into your testing strength

Come listen to Joel Montvelisky speaking on June 9th at the RTC

PractiTest and Beyond

- A-Z Guide on Sanity Testing

Read our latest resource by Randy Rice

April 2021 Updates

Here are some updates from the past month

Product

- Customize the default view on all modules

From now on you can customize columns on the main (All) grid view of Requirements, Test Library, Test Sets and Issues, giving you more flexibility and a better view.

- Turn List fields to Multilist fields

You can change custom list fields to multi list fields in your Fields Settings window.

Coming up

- The 10th OnlineTestConf - The very first QA conference in the world is celebrating its 10th edition

Listen to the best speakers - for free! May 11-12th. Sign up here

Want a taste first? Join Rob Lambert for a warm-up talk, ‘How to create a test strategy that aligns to the business’, on May 5th. Sign up here

- Testing a data science model webinar by Laveena Ramchandani

May 26th. Sign up here

PractiTest and Beyond

- Requirement Matrix Traceability

Read our latest resource by Randy Rice

March 2021 Updates

Here are some updates from the past month

Product

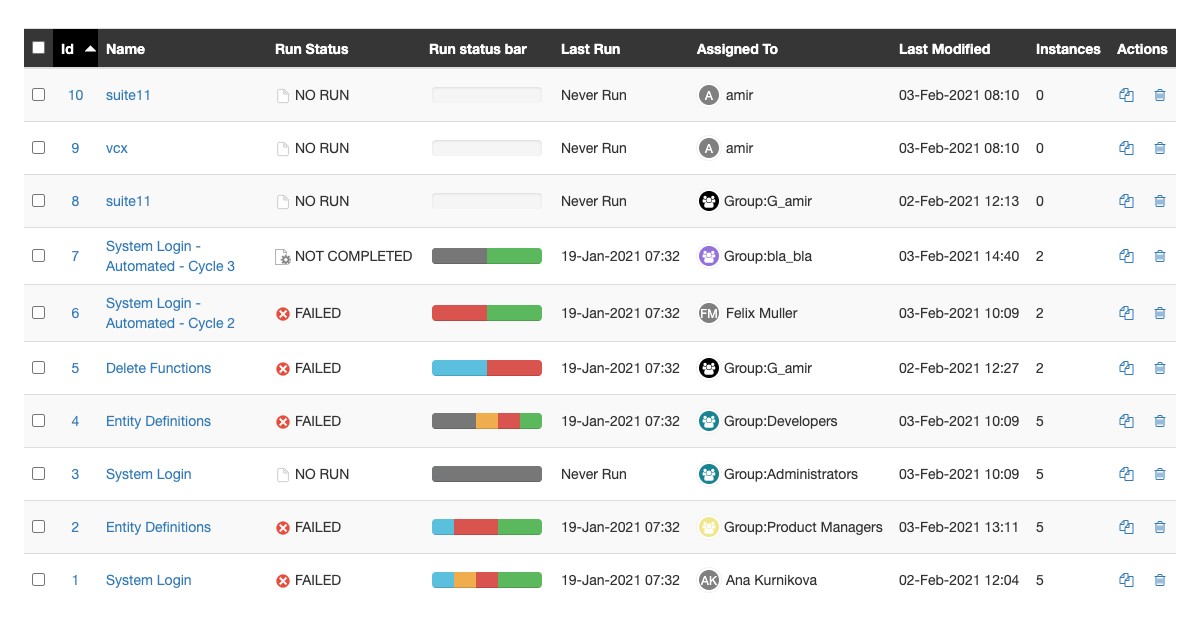

- Assign entities to groups

From now on, once enabled in project settings, you can assign an entity not only to a single user but also to a group of users (as defined under users & groups in project settings).

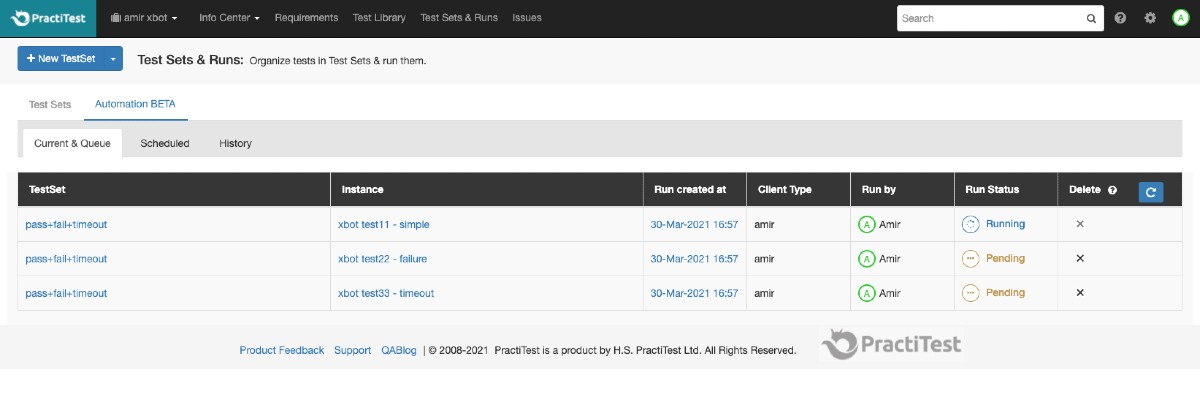

- Taking automation to the next level - xBot BETA improvements

Under the TestSets & Runs module, you can now find all the information you need about your xBot tests: the currently running tests, the queue, the future scheduled tests and the history. Learn more about xBot options here

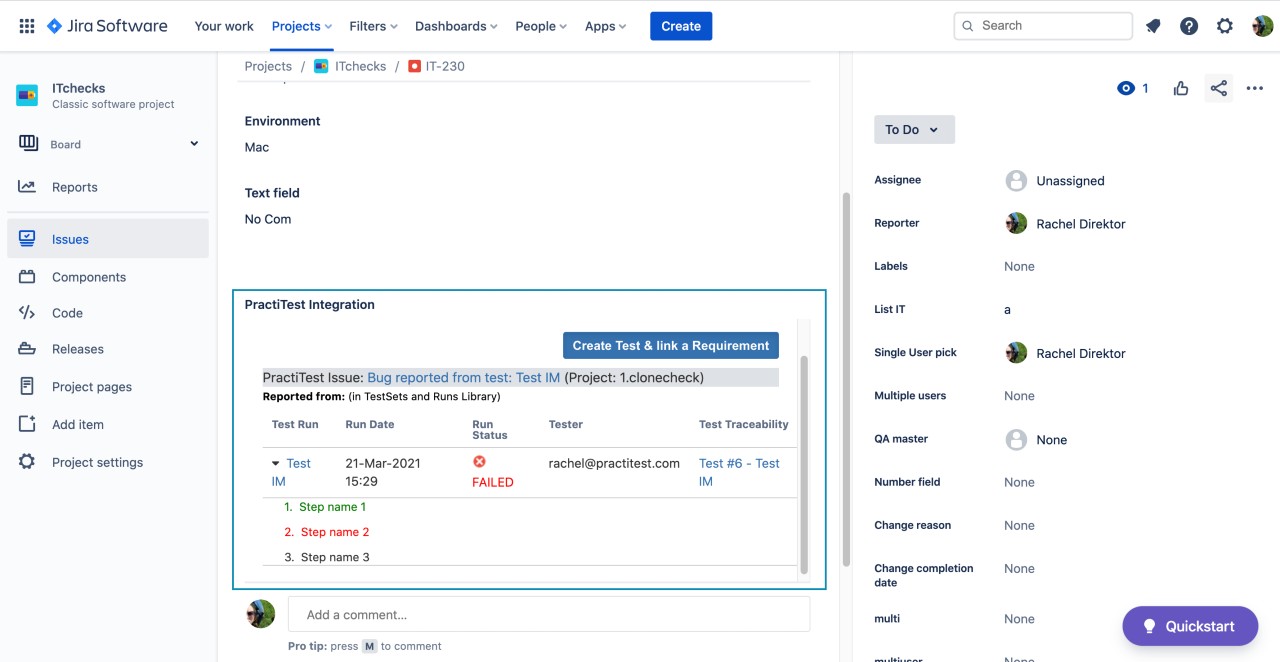

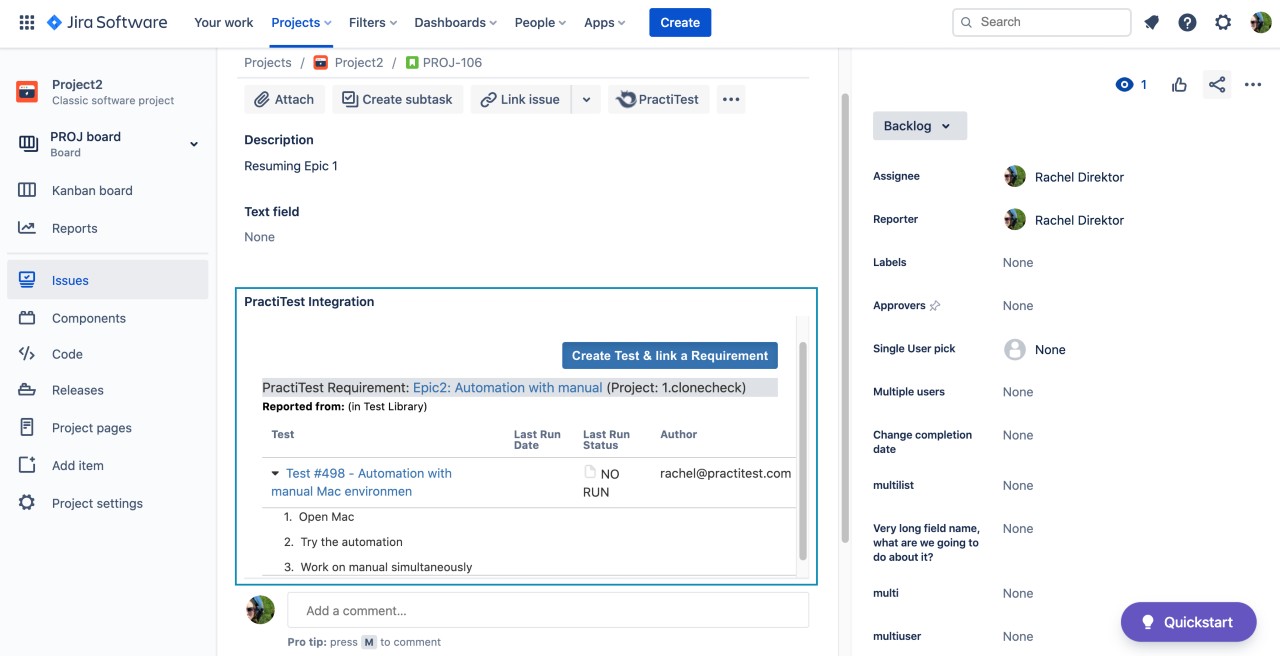

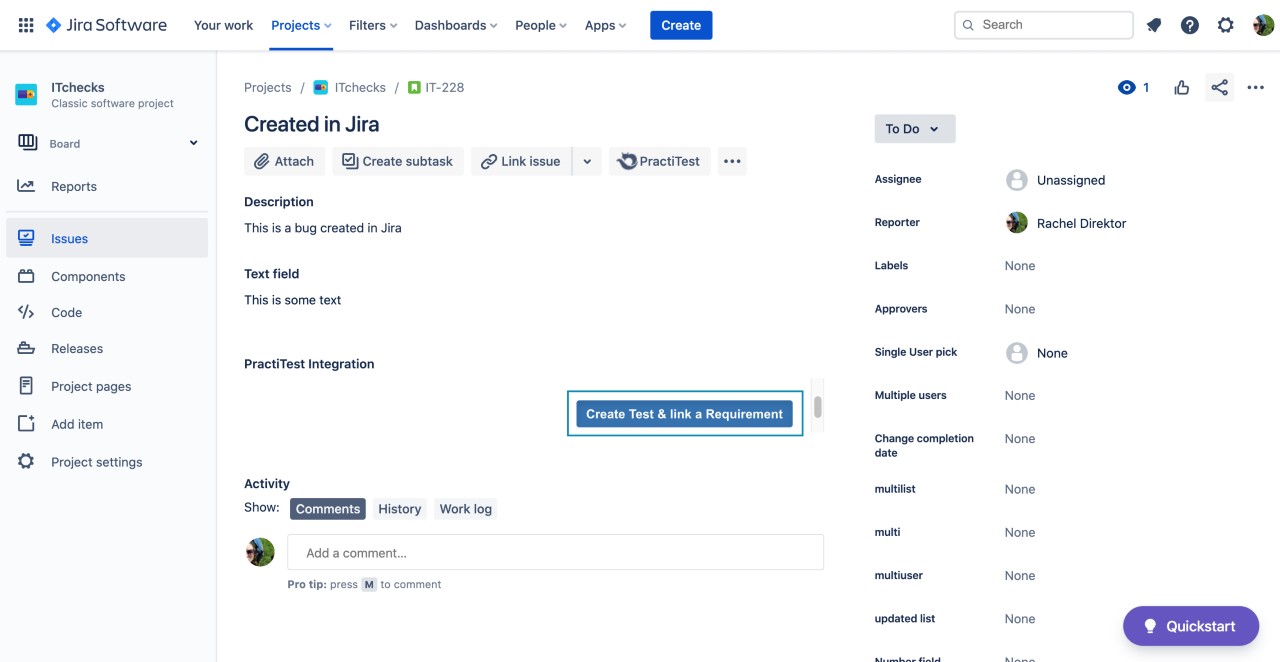

- New Jira Panels - Get more from your PractiTest-Jira integration

With Atlassian’s updated issue view, you can now see all your relevant PractiTest information inside Jira in a new panel (Server and DC users need to update their PractiTest plugin in Jira) and easily link issues from Jira to PractiTest requirements:

- If your issue originated in PractiTest (or is linked to a PractiTest issue) you will have a clear view and links to all the run’s data.

- If your issue is linked to a PractiTest requirement, you will see all the data about the tests covering this user-story.

- If you want to link a Jira issue to a PractiTest requirement and create a test to cover it, you can do it with a press of a button.

Coming up

- {Guest Webinar} Apply the DEER heuristic to make your Exploratory Testing way more visible

Join Anne-Marie Charrett on April 7th. Sign up here

- {PT labs} Catch up on all the latest updates to PractiTest

Join Joel Montvelisky as he goes over some of the new PractiTest improvements that can boost your process and product. Register here

- {Experts Panel Webinar} What not to miss in the 2021 State of Testing™ report

Join Joel Montvelisky and Lalitkumar Bhamare as they talk to fellow testers about the new report’s findings.

February 2021 Updates

Here are some updates from the past month

Product

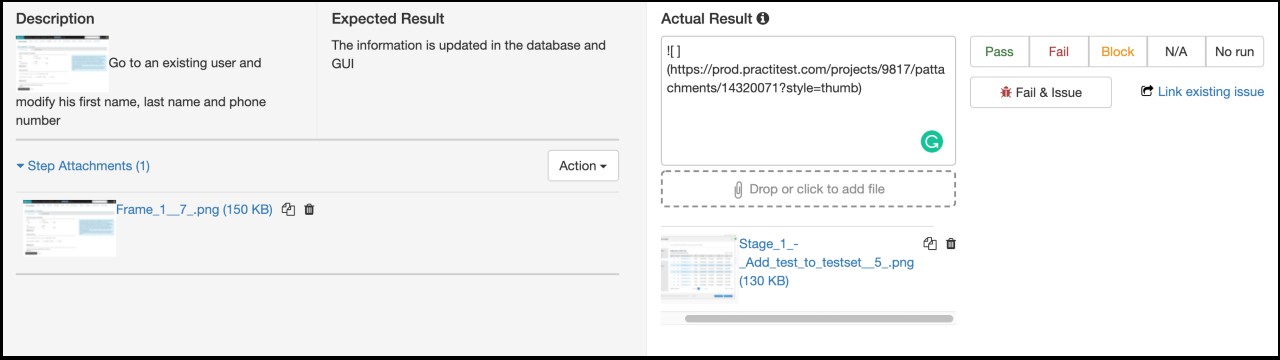

- Intuitively and easily attach files and images

From now on, you can attach files using one of the options below:

- Press the ‘Drop or click’ button

- Drag & drop into the button zone

- Drag & drop into description fields

- Paste a link (Ctrl+V / Cmd+V) from the clipboard into a description field

- Copy attachment to clipboard and paste it in any markdown field in PractiTest.

- Jira integration improvements - Create an issue in PractiTest and choose whether to sync it to a Jira ticket or not

You asked - We listened! From now on, you can create an internal PractiTest issue (that is not synced to Jira) even if your project is integrated with Jira. Later, you can sync it to Jira from within the issue.

Coming up

- Characteristics of a Modern Test Process: Guest Webinar by Jan-Jaap Cannegieter

Learn what Modern Test Process Characteristics mean for us as testers. Thursday, March 18th, 16:00 CET / 10:00 EST. Sign up here

- Agile software development and QA - Webinar in Japanese

We are happy to invite you to a webinar by our Partner CEC ltd. and Fujitsu Software Technologies. March 2nd 15:00 JST. Register here

PractiTest and Beyond

- Five Test Automation Metrics to Help You Improve Your CI/CD Process

Read our latest resource about Test Automation Metrics, by Ali Hill. Read here

January 2021 Updates

Here are some updates from the past month

Product

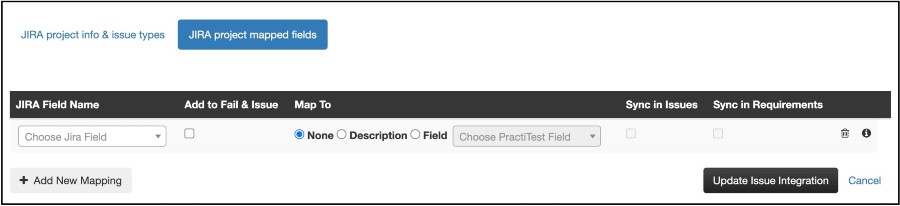

- Best test management for Jira - edit Jira fields directly from PractiTest

After adding the ability to map fields from Jira to PractiTest, you can now edit Jira fields’ values from PractiTest. This is one more step in making PractiTest a hub for all your information, allowing you to generate dashboards and reports based on your ENTIRE data.

- Demo data projects

Want to play around with PractiTest without messing up your statistics? Have a new tester on board who should practice before starting the actual work? You can now create a new ‘demo project’ full of demo data for you to learn and explore the software. (Be sure to review our training course and certification program as well.)

- Importing and exporting traceability

When importing or exporting entities, the linked entities will be imported as well to keep full traceability throughout your project.

| Import/export | Linked Traceability |
| --- | --- |
| Requirements | Tests and Issues |
| Tests | Requirements |
| Issues | Requirements and Issues |

Coming up

- Webinar - SEBTE: A Simple Effective Experience-Based Test Estimation, by Robert Sabourin

Learn how SEBTE can help your testing work, starting tomorrow. Sign up now

- PT labs webinar series - xBot and Automation in PractiTest

Come hear about the extensive automation capabilities of PractiTest. Register here

December 2020 Updates

Here are some updates from the past month

Product

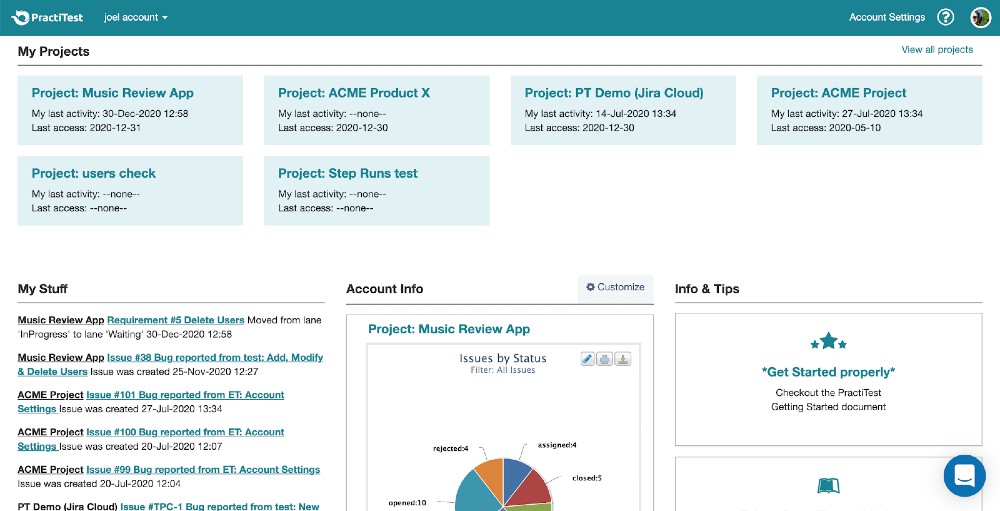

- Account overview page - cross project information, faster.

This new landing page centralizes all your account’s links and recent activities so you can view your responsibilities, actions and data across all your projects upon login.

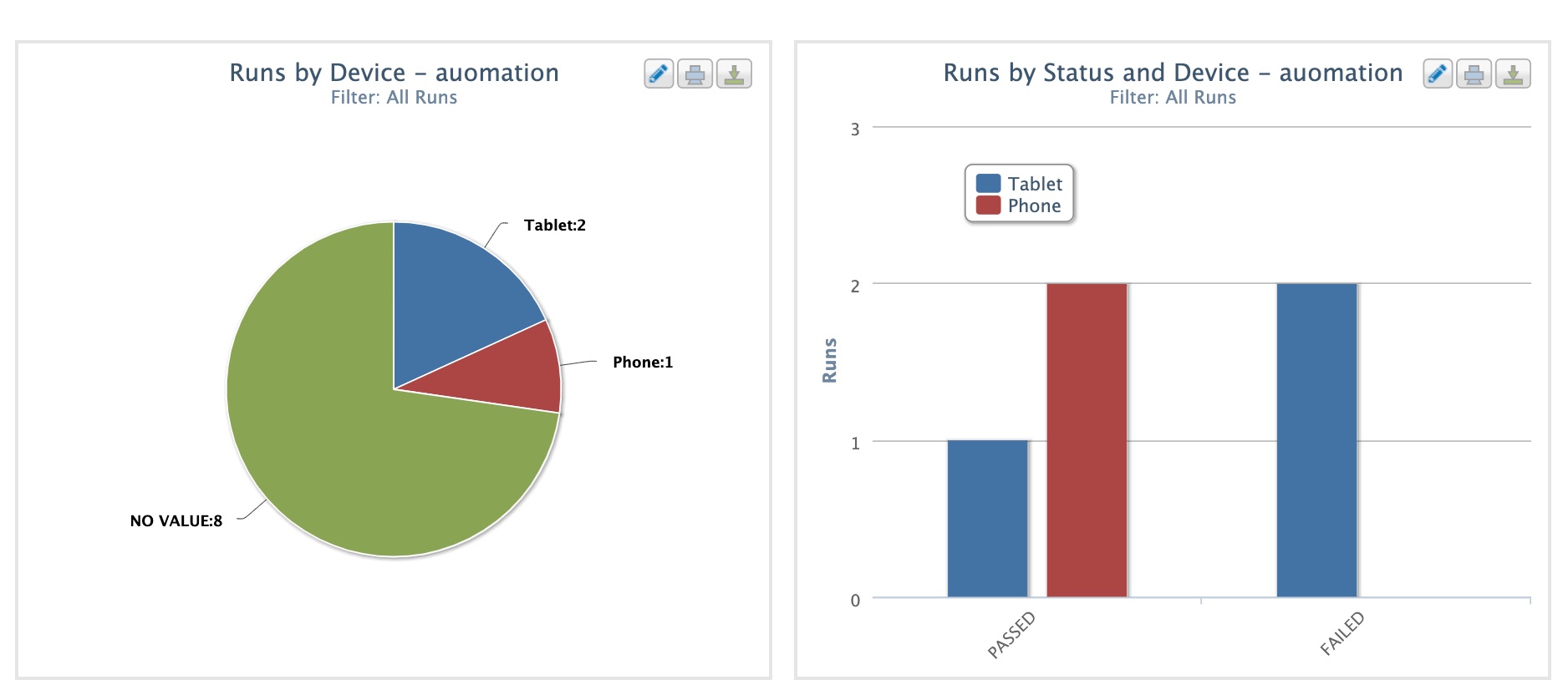

- Run filter for graphs and reports - more visibility to your automation testing status

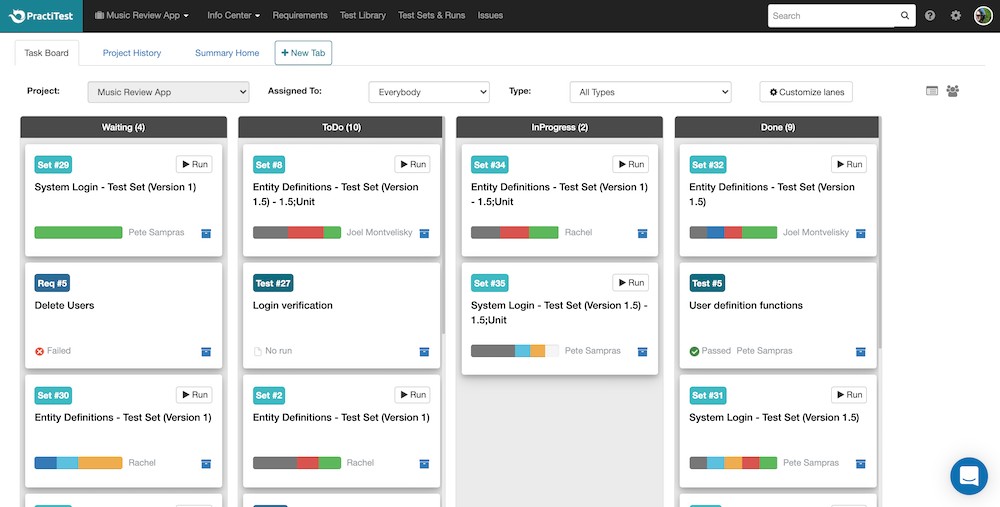

We added the option to filter data by run level fields to all run-based dashboard items and reports.

- TaskBoard GUI enhancement

Not using PractiTest’s taskboard? Now is the time to start! Manage everyone’s assigned entities - Kanban style.

Coming up

- How to find your dream career and become successful leaders

Looking for practical strategies to find your dream job? Join Raj Subramayer’s webinar on Jan. 6th, 2021, 16:00 CET / 10:00 EST. Sign up now. 3 attendees will receive a free copy of Raj’s new book, “Skyrocket your career”.

- State of Testing Survey is about to conclude

Take part in the longest running QA and Testing annual report. Answer this quick survey

PractiTest and Beyond

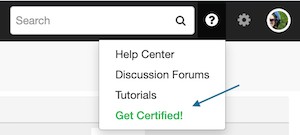

- Master Your PractiTest knowledge

Click the help icon to access our certification program. View the training course and get ready to test your PractiTest skills.

November 2020 Updates

Here are some updates from the past month

Product

- Faster and easier Jira field integration settings

Enjoy improved set up and display of your synchronized Jira fields within PractiTest.

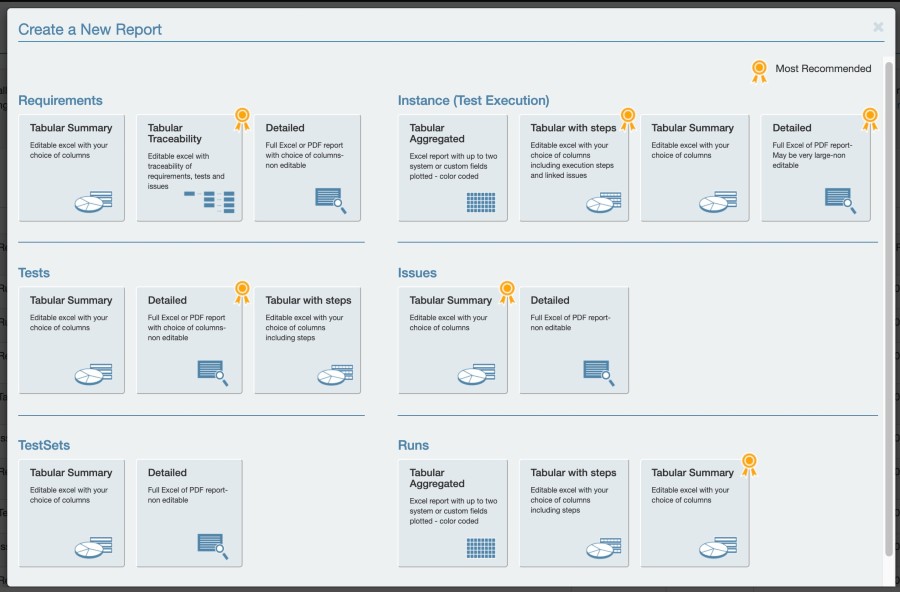

- More Run Level visibility with new Graphs & Reports

We keep adding more functionality to enhance your testing, this time with additional graphs and reports at the level of your Test Runs. You can create Distribution Table, Bar Chart, Pie chart and Execution Progress graphs based on Test Set and filtered by Run Level fields.

We’ve also added a whole new run level reports section - check it out!

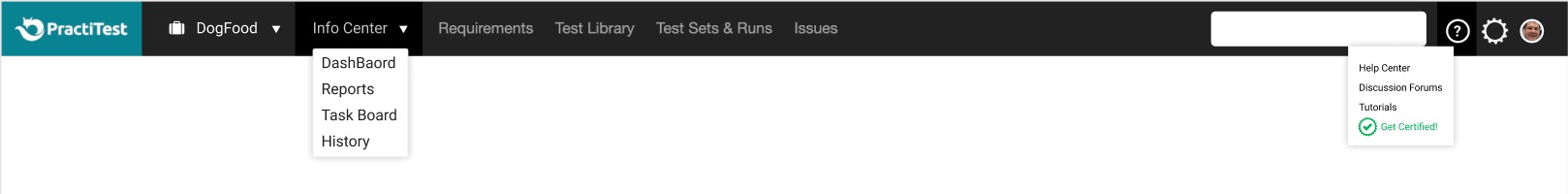

- New navigation bar with exciting new features!

- PractiTest certification

Are you a PractiTest hero? Get acknowledged by taking the PractiTest test and receive your certification.

Don’t feel like a master of PractiTest just yet? Take the online course and become one!

- User profile picture

Know who is in charge of what at a glance! You can now add an image to your profile that will appear next to your name whenever you are mentioned in the software!

Coming up

- The Online TestConf is running - you can still catch the last day!

2 days are behind us - one more day to go. Join the best speakers from around the globe. Sign up now

- CEC ltd and MSYS will host a PractiTest and Eggplant webinar in Japanese: 「DX時代のテストアプローチ」-加速化するビジネスとテストプロセス変革-

December 10th, 15:00~15:45 JST. Sign up here

- Peek into Observability from a tester’s lens

Join this webinar by Parveen Khan, where she will share her observability journey and how observability can support testing. Dec. 16th at 16:30 CET / 10:30 EST. Sign up here

- How to find your dream career and become successful leaders

Join this webinar by Raj Subramayer discussing practical strategies to find your dream job, be massively successful in it, and meet your rockstar potential. Jan. 6th, 2021, 16:00 CET / 10:00 EST. Sign up now

October 2020 Updates

Here are some updates from the past month

Product

-

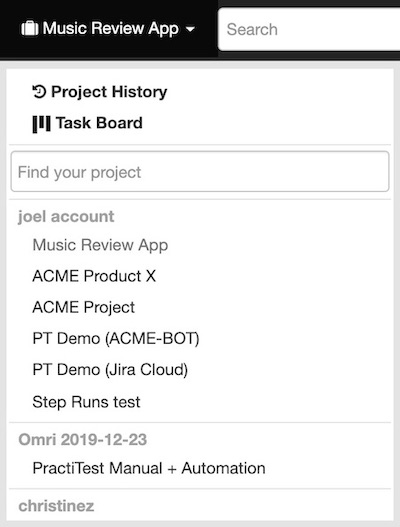

Filtering projects in project dropdown

Working with more than 8 projects? This one's for you. From now on you can search within your project dropdown list to get where you want faster and more easily.

- PractiTest internal automation framework, xBot 2.0 beta, will be out within the next few days!

Talk to us if you want to be one of the first ones to try it!

Coming up

- Stop throwing away 75% of the value generated by your Automated Testing!

A PractiTest-Eggplant joint webinar.

Joel Montvelisky, PractiTest chief solution architect, and Evan Bartlik, Solution Architect at Eggplant, will demonstrate how the PractiTest-Eggplant integration can help you cope with the challenges of managing automated and manual testing. Nov 12 - Sign up here

- Making Manual and Automated Testing Work Together

Joel Montvelisky is hosting Viv Richards, a leading QA consultant, on Nov 18th.

- PractiTest is proud to sponsor two testing events: Agile Testing Days (Nov 10-12) and ASQF Quality Day (Nov 26)

- OnlineTestConf is coming up! 1-3 December 2020

Check out the program here

Haven’t signed up yet? Do it now!