PractiTest Updates

September 2020 Updates

Here are some updates from the past month

Product

- GUI Improvements

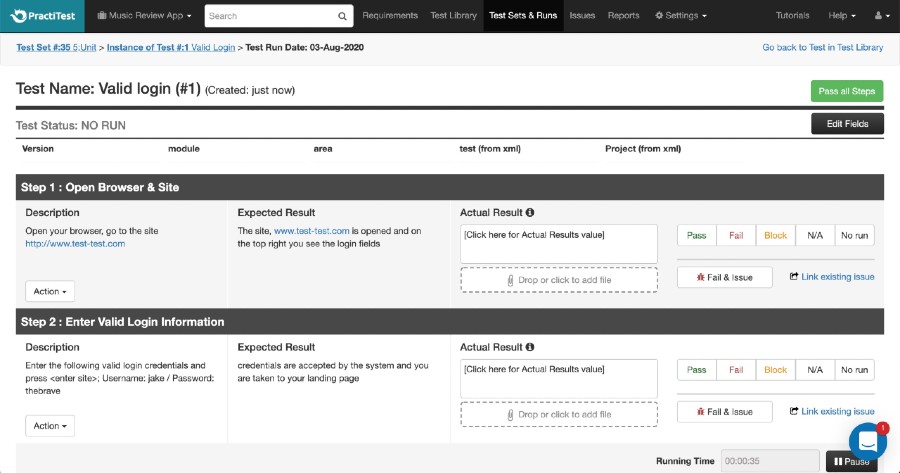

- Run Steps Window

Running scripted tests is at the heart of manual testing. This is why we made the run window more user-friendly.

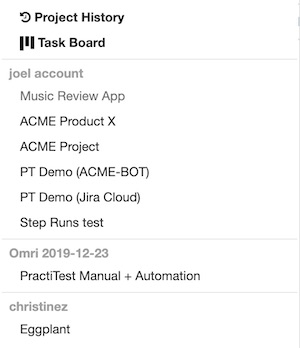

- Project drop down list

Working with multiple projects? In multiple accounts? You can now navigate between your projects more easily using the new projects drop-down list.

- Auto-filters Improvement

When creating an auto filter, you will now have the ability to hide the empty values in your filter for less clutter and more productivity. -

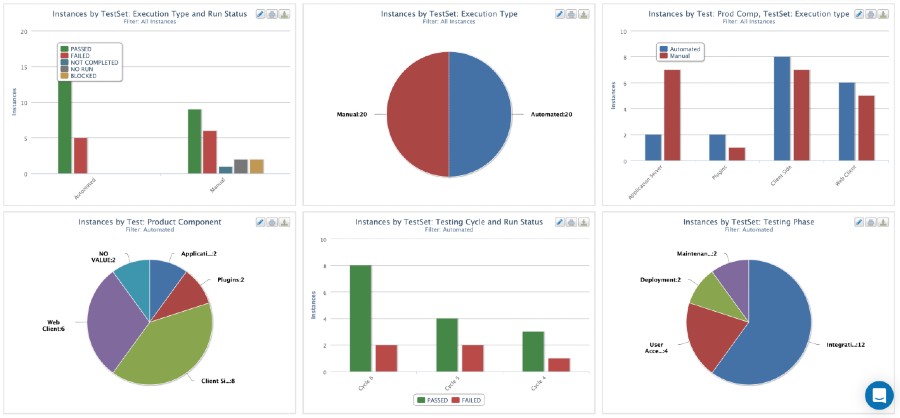

Better visibility with new graphs!

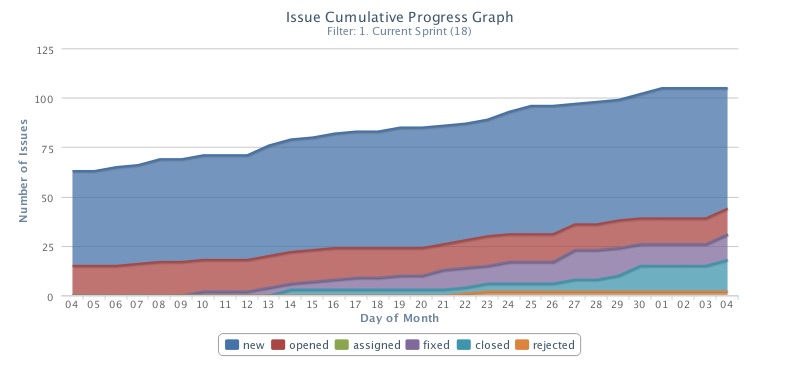

- Issues status progress graph

Follow the status of your issues with this new adjustable graph.

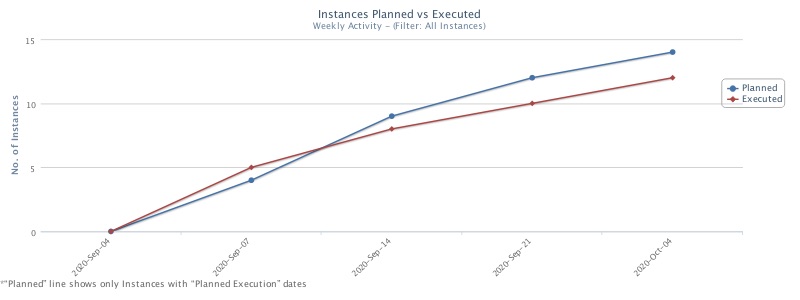

- Planned-vs-Executed graph

Monitor your team’s progress with this new graph, which helps you understand the status of your actual testing.

Coming up

- PractiTest Webinar: Let’s Focus More On Quality Engineering And Less About Testing

Join Joel Montvelisky this Wednesday, October 7th - Sign up here

- Online Conferences and Events:

TestCon conferences - a workshop about “Test Management when Testing is the Whole Team’s Responsibility”.

STPCon webinar on October 21st: a talk about Modern Testing - See here

- OnlineTestConf is fast approaching!

This December 1st-3rd. Haven’t you signed up yet? Do it now

August 2020 Updates

Here are some updates from the past month

Product

- The QA revolution is here - don’t stay behind!

Manage your entire process of automation, manual and exploratory testing - all in one place

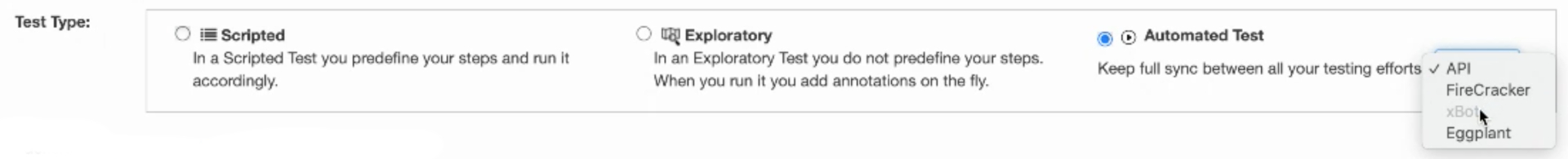

We have added new functionality to improve the management and coordination of your manual and automated testing efforts. We have added additional test types to cover different automation approaches (REST API, FireCracker, xBot and more), additional execution options, and better ways to view and report results.

- PractiTest JIRA Integration just got much stronger

Based on our clients’ requests, we’re proud to present two major improvements to the JIRA integration:

- Synchronise additional fields from JIRA to PractiTest, and take advantage of PractiTest advanced Filters and Dashboard for end to end visibility

PractiTest now lets users bring in information from additional Jira fields when linking Issues and Requirements between the two systems. This means users will have all their important data in PractiTest, giving them end-to-end visibility of User Stories, Tests and Issues with the help of our Advanced Filters and flexible Dashboard and Reporting options. Read more about it here.

- Fill out additional Jira fields while creating a ticket in PractiTest

To make reporting issues from PractiTest to Jira simpler and faster, we added the option to include more fields when reporting bugs to Jira from your PractiTest runs. For more details, go here.

- Issue’s age information

From now on, every issue in PractiTest will have a new field displaying its age. This measurement helps organizations improve their bug management process by providing more visibility into the time it takes to resolve issues. In addition to the field itself, we have deployed a Defect Age graph on the dashboard to give you a better understanding of this metric in your projects.

Coming up

- Guest webinar with Ben Linders

Ben will be talking about “Enhancing quality and testing in agile teams from theory to practice” on September 9th, 10:00 EDT / 16:00 CEST. Sign up here

- PractiTest is sponsoring SQiP conference in Japan

Check out our profile and have a chat with our local partners in the Online SQiP Event. Join the live demo on October 10th, 11:00 AM JST.

- Live Podcast Episode

Testing one on one is going live! Join Joel and Rob for a live session where you can ask them anything. September 16th.

PractiTest for the community:

- A New Resource

A new resource on how to master the new reality of manual and automation test management is now available on our website. Check it out

July 2020 Updates

Here are some updates from the past month

Product

- Test Management - including more of your automated testing!

Within the next few weeks, the way you manage your testing will take a step up by including more of your automation. PractiTest will include automation items in its GUI, and all types of test results (including Unit and CI/CD test results) will translate into coherent, actionable dashboards and reports.

- Last Run fields in Dashboard and Reports

After enabling the run level fields you can now use the information from your last run fields in Dashboards and Reports, as well as in many grids within the system. This provides better visibility and control of your results as well as the additional run-level information captured during your testing

- Step Parameters improvements

Step parameterization is one of the features our customers find most efficient, so we made it even better. You can now work with lists of values for your parameters and reuse existing parameters while writing your tests and steps.

- Add more fields to Jira Create Issue Modal

Report a ticket to Jira and include values for any Jira field you want - required and non-required. This change will make updating Jira even smoother. Contact us for more information

Coming up

- Cross Browser Test Management Using LambdaTest & PractiTest

Antony Lipman will be a guest in this LambdaTest webinar this Tuesday, August 4th, 2020, 10:00 AM PST. Sign up here

- Live Podcast Episode

Testing one on one is going live! Join Joel and Rob for a live session where you can ask them anything. Stay tuned for more details

- Joel Montvelisky on SwissTestingDays

Mindfulness at a testing conference!? Joel will introduce a number of techniques to help teams provide excellent testing results faster and in more focused operations. Read more here

PractiTest is on the Map!

- Testing in DevOps? a new blog post!

We recently invited Ali Hill to write a guest blog post in Joel’s QAblog. His article on The role of testing in DevOps blew our minds! We can’t wait to cooperate with him again!

June 2020 Updates

Here are some updates from the past month

Product

- Personal API Tokens (PAT)

With a personal API token, you can give each user unique access ONLY to the areas that user is permitted to use. PAT is enabled in most modules and can link users to their PAT actions. This is yet another security improvement that ensures your data is protected and that your process is running correctly. Read more about it here

-

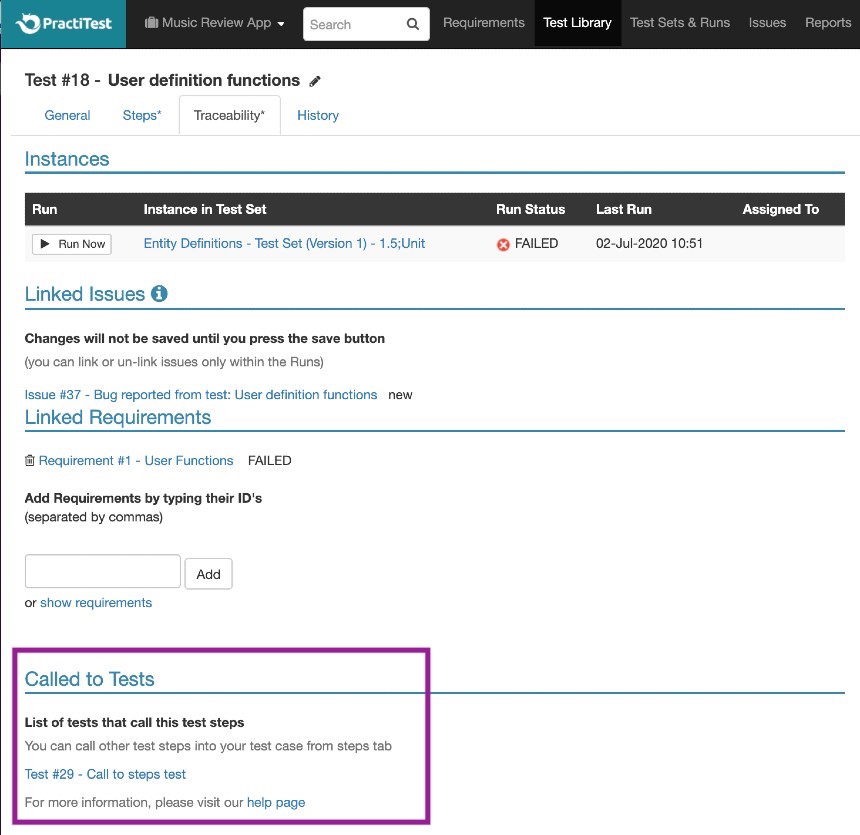

Extended traceability for ‘called to test’ tests

To ensure full QA coverage, you can now see, for any test, the tests that call it, with links to those tests.

- Minor dashboard improvements

You can now define the exact scope of time you wish to view in your dashboard item

Coming up

- PractiTest Labs

If you are working in the healthcare industry, you don’t want to miss this one. Join Edith and Antony on July 15th 10:30 AM EDT/ 4:30 PM CEST

- Aussies, Listen Up!

The Australian tester association is hosting Joel Montvelisky on July 16th, at 17:30 (Australia time) to talk about the new reality of (Manual + Automation) test management. Don’t miss out! Sign up here

- SAEC Days conference is hosting Joel Montvelisky

Joel will have 2 sessions, on the 22nd and the 23rd of July.

- Managing Security Tests and Creating a Secure Culture

PractiTest is hosting Santosh Tuppad, security expert, for a webinar on July 23rd, 10 AM ET. Sign up here

May 2020 Updates

Here are some updates from the past month

Product

- Jira integration version 3.0.0 is live!

Whether you work with Jira Cloud, Jira Server, Jira Data Center or the “No-Plugin” integration version, this new version makes your work with Jira and PractiTest smoother: it is easier to configure and adds valuable features such as Filter import and more! Read more about using this integration here

-

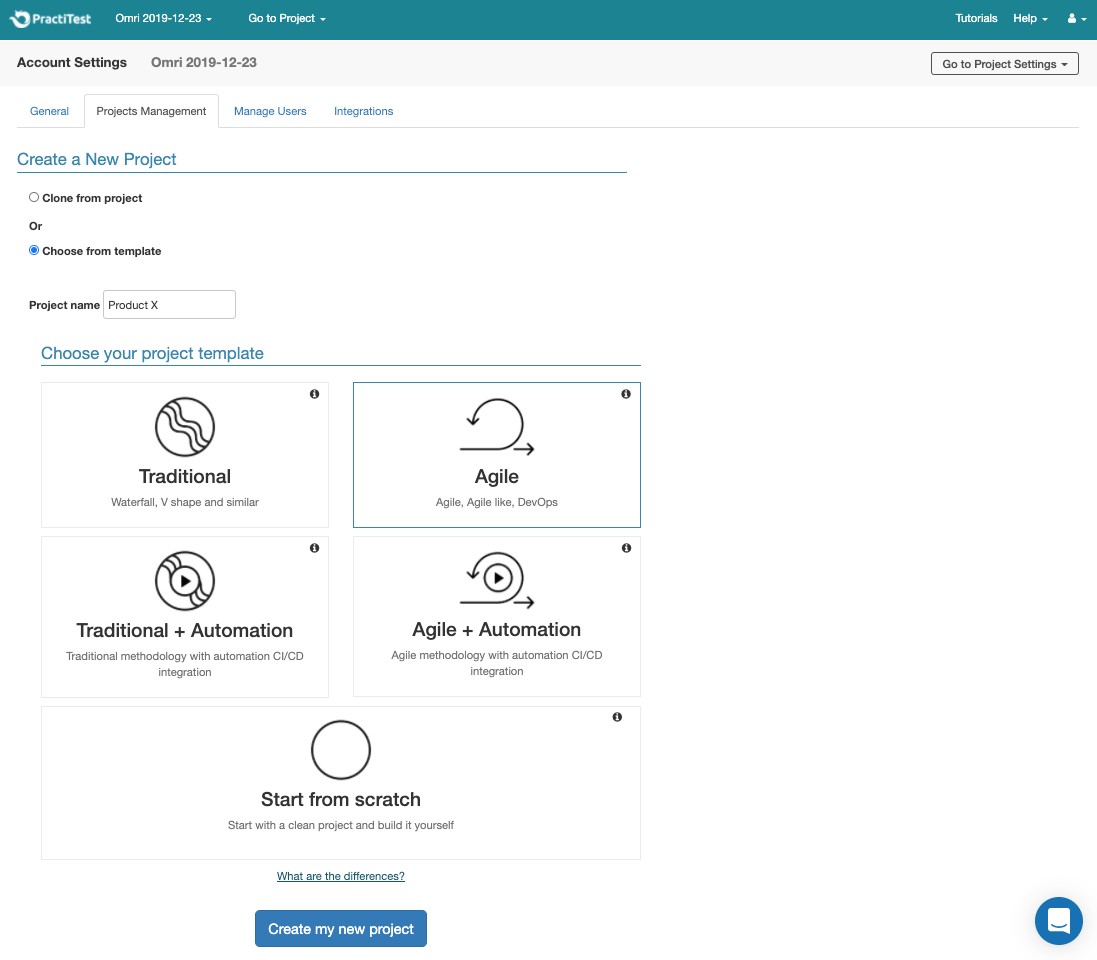

Project Templates

PractiTest now has the option to base a new project structure (fields, filters and dashboard items) on the methodology you choose - Agile, Traditional and/or Automation, in addition to the existing option to ‘clone a project’. For more information click here. -

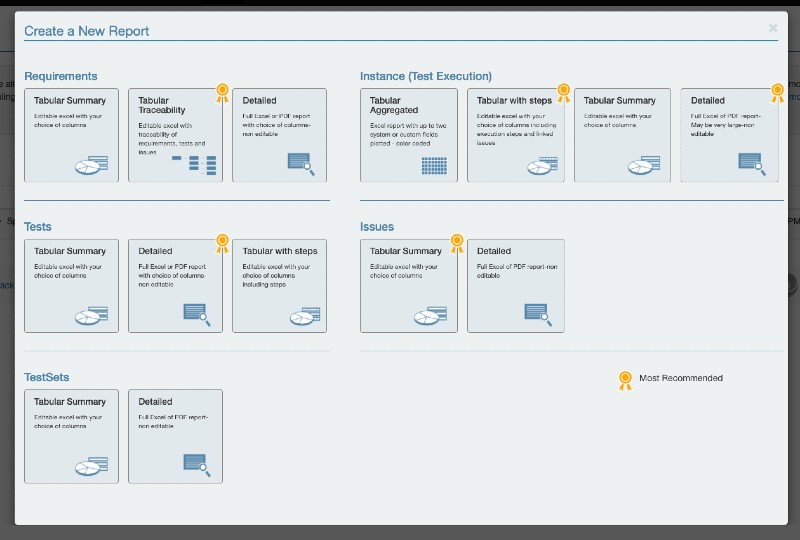

New Report creation GUI

Reports are not only an important tool in your testing toolbox, but also the face of your testing work when presented to others. This is why we made our report module clearer and more user friendly - now you can see and try all kinds of reports to help you do your work better!

- Test Run fields

PractiTest now has the option to add fields on the run level. Fields are at the heart of the PractiTest system. They not only define an entity, but they also let you filter entities, create specific dashboard items, reports and more. This means you can now define fields for test runs, just as you do for any other entity.

PractiTest is on the Map!

- Spring 2020 OnlineTestConf with more attendees than ever before!

3 days of testing and QA lectures, covering 3 different time zones, with over 4,500 registrants and lectures that broke the attendee number limit! Missed it? Let us know and we will invite you to the next event this fall.

Coming up

- Joel Montvelisky hosting Peter Walen to discuss how to achieve quality with as little “testing” as possible.

Peter and Joel will discuss the topic from a holistic perspective and not by “injecting quality as a result of finding and fixing bugs in the finished product”. Going to be a blast. Sign up here

- Kobiton’s Odyssey conference features Joel Montvelisky

Joel will talk about Modern Testing and how we can focus more on quality and less on testing. Check it out here

April 2020 Updates

Here are some updates from the past month

Product

- GUI enhancements to make your work easier

– Drag & Drop - easily upload attachments to PractiTest

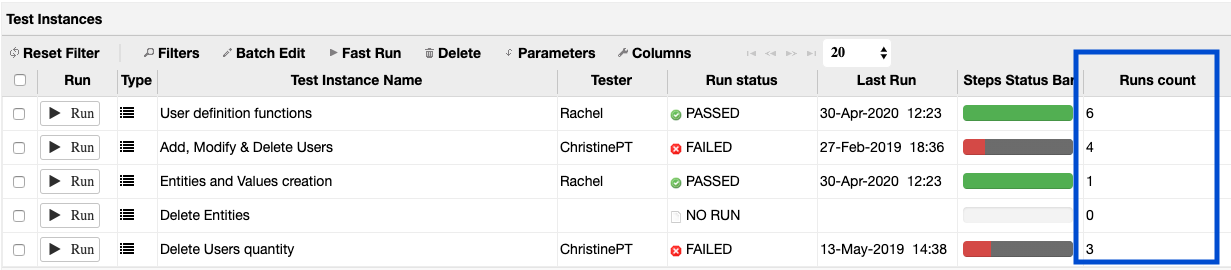

– Runs count field - We added a field in the Test Instance grid of each Test Set, to reflect the number of runs for each instance

- API Authentication Options

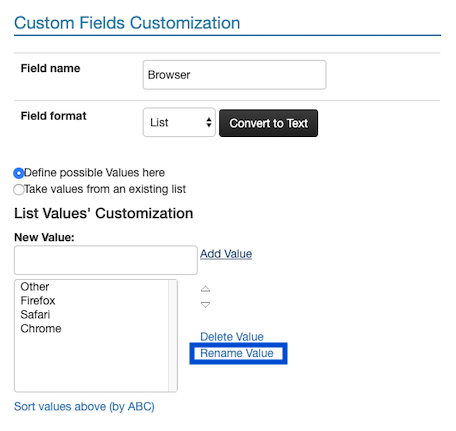

PractiTest API V2 supports three authentication methods:

– Basic

– Parameters

– Custom header (added)

Developer email is not required anymore. Read more here.

- Modify list values

Rename your list field value using the settings
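For readers who want to see how the three API V2 authentication methods mentioned above differ in practice, here is a minimal Python sketch that only builds the URL and headers for each one and sends nothing. The endpoint URL, the `api_token` query parameter, the `PTToken` header name, and the use of the account email as the Basic username are assumptions made for illustration; please confirm them against the API documentation before relying on them.

```python
import base64
from urllib.parse import urlencode

# Assumed endpoint, for illustration only; check the API documentation.
API_URL = "https://api.practitest.com/api/v2/projects.json"


def basic_auth(email: str, token: str) -> tuple[str, dict[str, str]]:
    """Basic: credentials are base64-encoded into the Authorization header."""
    # Assumption: the username is the account email and the password is the token.
    credentials = base64.b64encode(f"{email}:{token}".encode()).decode()
    return API_URL, {"Authorization": f"Basic {credentials}"}


def parameter_auth(token: str) -> tuple[str, dict[str, str]]:
    """Parameters: the token is appended to the query string."""
    # Assumption: the query parameter is called api_token.
    return f"{API_URL}?{urlencode({'api_token': token})}", {}


def header_auth(token: str) -> tuple[str, dict[str, str]]:
    """Custom header: the token travels in its own request header."""
    # Assumption: the header is called PTToken.
    return API_URL, {"PTToken": token}
```

Each function returns a `(url, headers)` pair that can be handed to any HTTP client, for example `urllib.request.Request(url, headers=headers)`.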

PractiTest for the Community

- COVID 19

Due to the COVID 19 situation, we gathered some relevant resources that can help you do your work during this difficult time.

Also, in support of the Leisure, Travel & Tourism industries, which have been hit hard by COVID 19, we are offering 2 free months of PractiTest with no commitment for those industries!

- State of Testing 2020 is published!

The 7th edition of the original longest-running QA & Testing report is out and is not only interesting, but also full of new insights.

Coming up

- OnlineTestConf, May 19th-21st, 2020

3 Days, 3 Time zones, 12 speakers, over 4500 registrants from the entire world! Save your seat

March 2020 Updates

Here are some updates from the past month

Product

- Auto filters

When creating a filter based on a ‘list’ type field, Auto filters allow you to automatically create sub-filters based on the field’s list values. The filter is synced to the field and any change in the field settings will be reflected in the filter as well. Read more about it here.

- Annotation type customization for Exploratory Testing

From now on, you can edit, add, remove and define your own annotation values and give them a unique color.

PractiTest is on the map!

- PractiTest Ranks as a momentum leader on the G2crowd test management tools Grid

G2, the world’s largest tech marketplace, nominates PractiTest as a momentum leader in the test management category.

- How to manage teams forced to test remotely - article and webinar

Due to the COVID-19 global health restrictions, many teams are forced to function with remote testers. We gathered some tools and practices to help you manage and coordinate testing better in times like these. Find out more

Coming up

- OnlineTestConf, May 19th-21st, 2020

The only testing conference that cannot be canceled by Covid-19 (CoronaVirus). The upcoming event will focus on testing challenges and success stories. Save your seat

February 2020 Updates

Here are some updates from the past month

Product

- GUI enhancements

To make your work easier.

– Dashboard editing - Add and edit tabs and items quickly and easily with the new embedded edit icons on each graph.

– Filter management - Add and manage filters easily with clear buttons.

– Test type choice - Clearer differentiation between scripted and exploratory testing.

- Clone ONLY failed tests when cloning a test set

From now on, when cloning a test set (as a permutation as well) you will have the choice to clone only the failed tests in order to prevent repetitive work.

- IE Support EOL

From August 1st, 2020, PractiTest will not support Internet Explorer. Users with IE will be able to log in, but some items might not work properly. We will continue to support the latest stable versions of Edge, Firefox, Chrome and Safari.

PractiTest is on the map!

- QA or the highway

Was great talking and presenting at the Ohio event, getting to know interesting people and sharing some of our knowledge. Looking forward to next time

- PractiTest Labs

We had an interesting and super valuable session on how to manage automation in PractiTest. You can watch it here.

- Cooperating with Broadcom

Joel Montvelisky, our chief solution architect, was featured on Broadcom’s CA Rally (Agile Central) blog, writing about The Need for Manual Testers in Our Agile Teams.

- Tool review

And again we got an awesome review as a recommended test management tool by our friends at Codoid. So great to be appreciated by industry leaders.

Coming up

- How to improve waterfall projects webinar

Our monthly webinar with Joel is back. This month Joel will discuss “10 things to improve in your Waterfall projects that we can learn from Agile and DevOps”. Sign up here

January 2020 Updates

Here are some updates from the past month

- Jira Server and Datacenter no-plugin integration:

This new integration option doesn’t require you to install anything, and allows you to easily configure two-way integration between the two systems. Learn More

- PractiTest Labs:

The first workshop session of PractiTest labs took place this month, discussing custom fields and filters, and it was a huge success. Thanks to all who participated, there’s much more to come!

We highly recommend that everyone watch the recording, since it will help you get significantly more value from PractiTest. (Don’t say we didn’t tell you). Watch The Recording

- Creating test sets from tests import:

From now on you can create test sets that will contain your imported tests, directly from the import module. Learn More

- Update project’s history with API changes:

We added a new API parameter that will update the history sections (including the global history), with Requirements, Tests and Issues changes that were made using API requests. API Documentation

- New guide for using PractiTest with Automation via the API:

We created a detailed step-by-step guide for integrating your automation results into PractiTest using the API. Download PDF

- The 7th State of Testing survey is now live and awaiting your answers:

The final report is the most comprehensive out there in terms of software testing trends. If you didn’t have the chance to answer yet, you can still influence the report. Learn More

- QA or the Highway conference:

Come and join us in Columbus, Ohio on February 25th! We are sponsoring this wonderful event, in which Joel, our Solution Architect, will also be one of the speakers.

- PractiTest tool review by the software testing thought leader, Raj Subrameyer.

Wait for it…

FireCracker UI:

This feature will be released shortly and will greatly facilitate the integration process with CI/CD frameworks. Stay tuned.