This guide aims to get you up to speed quickly with the PractiTest platform, ensuring you are aware of all the options that would make your PractiTest experience great, and save you loads of time in the process!

If you have any questions please make sure you let us know. You can use the widget at the bottom right side of the screen to reach out. We’d be happy to hear from you!

What is PractiTest?

PractiTest is a centralized test management platform to organize, run, and visualize all your QA efforts.

The platform allows you to easily reuse your tests, avoid duplication and unnecessary overhead, and record test results with ease. It will also save you time when finding defects, by producing the reproduction steps for you, and help you avoid duplicate defects and bugs.

The short video below covers the main flow of PractiTest.

Table of Contents:

- Accessing PractiTest Projects

- PractiTest Modules and Navigation

- Test Library - Creating, Importing, and Updating Tests

- Test Sets & Runs - Running Tests and Recording Results

- Tracking Bugs and Issues

- Requirements and User Stories Management

- The Milestones Module

- Filters

- Additional Resources

Accessing PractiTest Projects

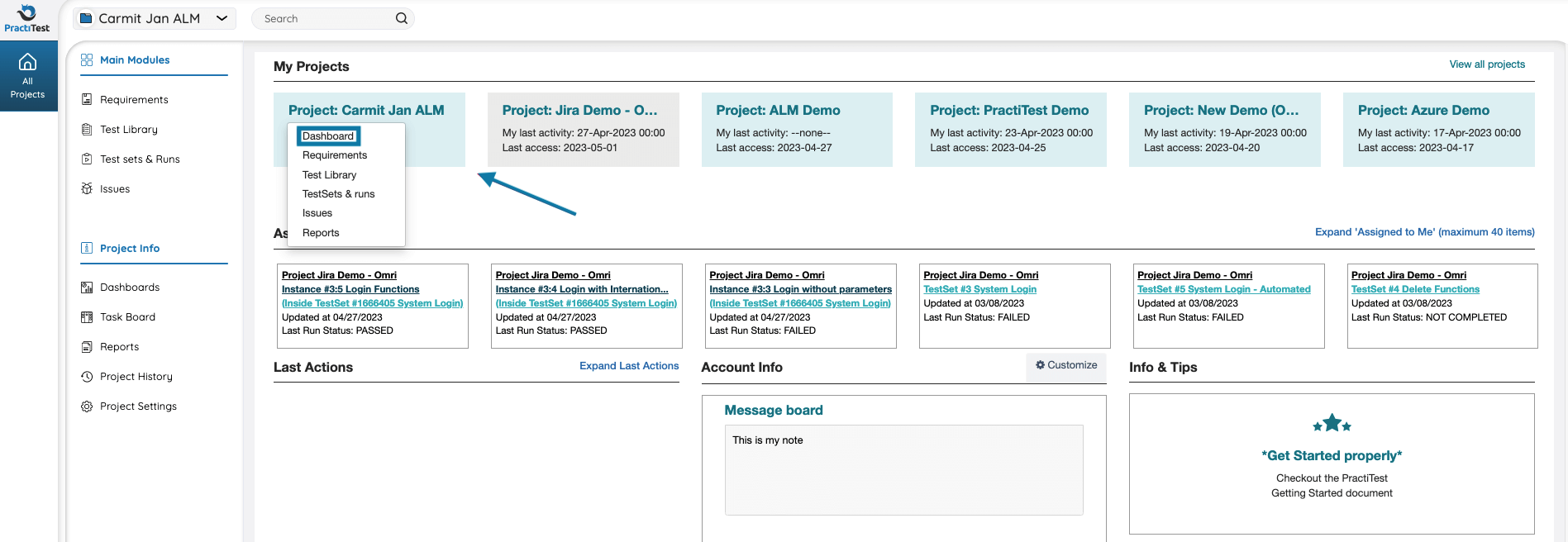

The first screen you see when logging in to PractiTest is the account landing page. The ‘Assigned to me’ and ‘Last actions’ sections of this page show all items relevant to you across all your projects. From this page, you can access individual PractiTest projects.

To access a project, click on its name, and select the module you want to access.

PractiTest Modules and Navigation

PractiTest has five main modules:

- Milestones - the project management module, where you define high-level testing objectives with defined timeframes

- Requirements - where system requirements and user stories are created and managed

- Test Library - where test cases are created and managed

- Test Sets & Runs - where tests are run and results are recorded

- Issues - where issues, bugs, and defects are managed

You can access each one of the modules from the main navigation menu.

In addition to the different modules, you can also access the project info sections of PractiTest via the Info center at the bottom of the navigation bar:

- Dashboards

- Reports

- Task Board

- Project History

- Project Settings

From the bottom left side of the main navigation bar, you can access your personal settings and PractiTest tutorials.

Test Library - Creating, Importing, and Updating Tests

Tests Creation and Management

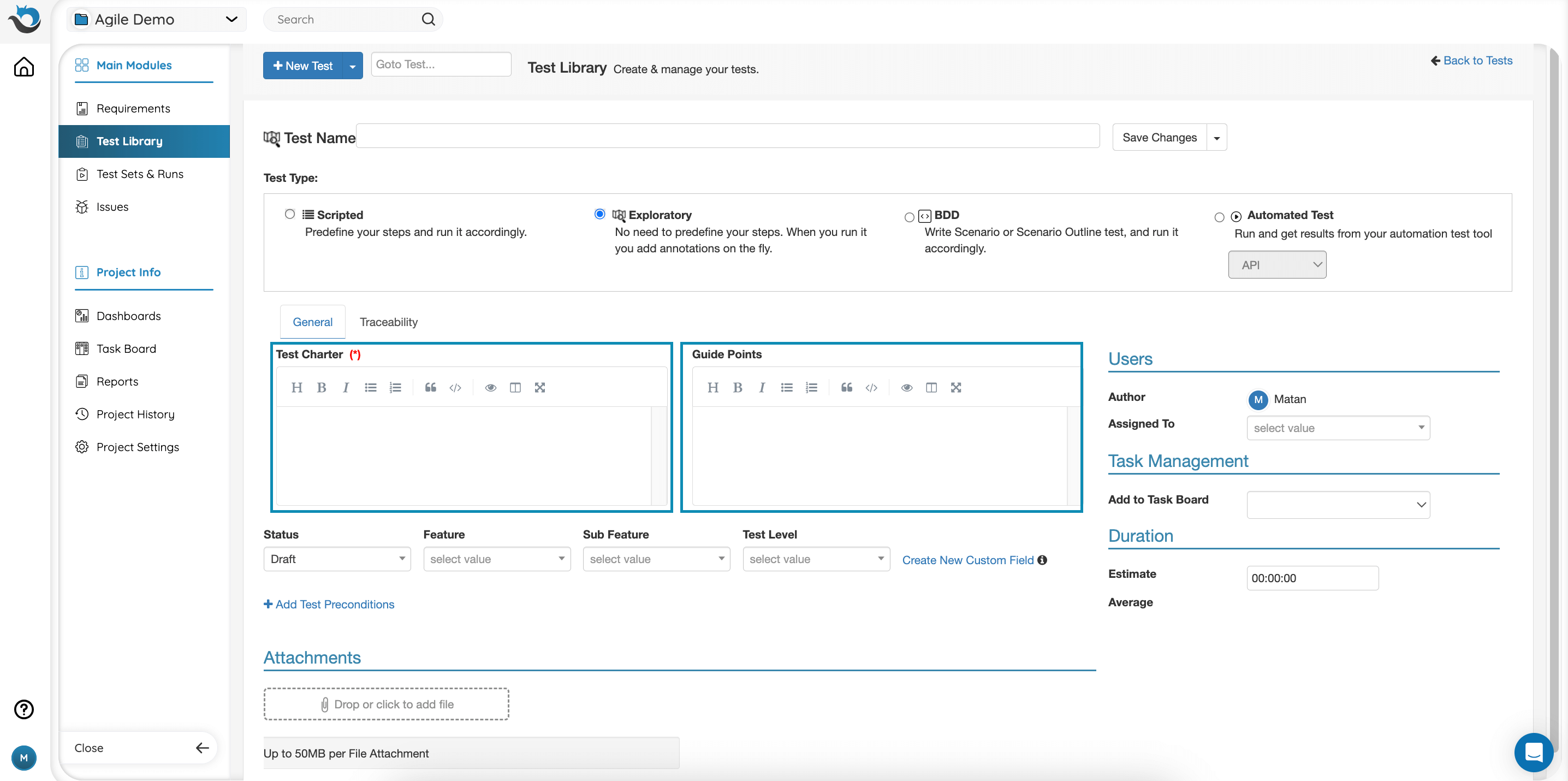

There are four types of tests available in PractiTest:

- Scripted - manual test with predefined steps

- Exploratory - manual test without predefined steps

- BDD - Behavior-Driven Development test based on the concept of “given, when, then”

- Automated - automated test in which results are updated automatically

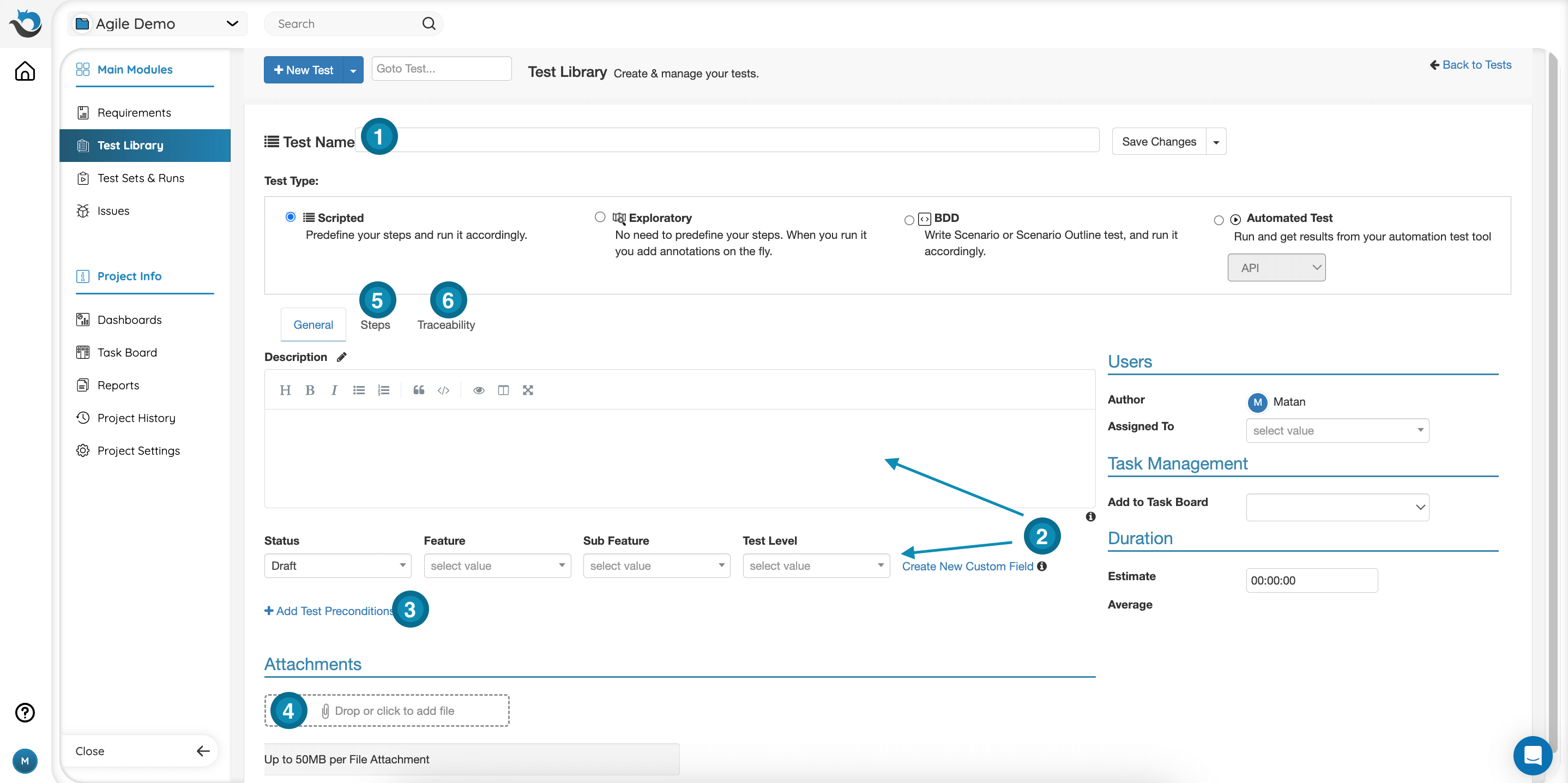

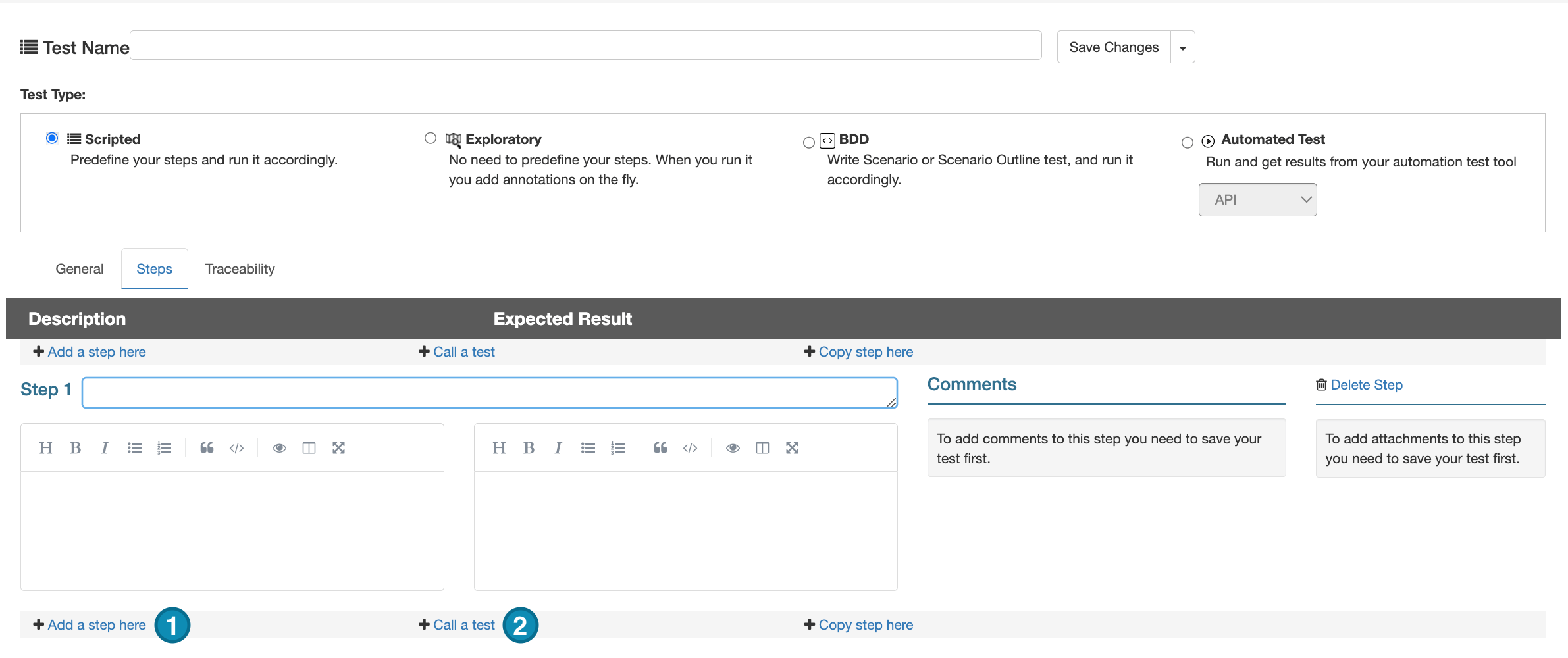

Creating Scripted Tests

- Test Name - the name of the test case you are creating

- Test Description & Test Fields - describe your test in the description box, and fill in the fields below based on the test information

- Test Preconditions - if needed, add preconditions for your test by clicking the ‘Test Preconditions’ link

- Attachments - drag and drop attachments in the designated area at the bottom of the page

- Steps Tab - access the steps tab to add steps information

- New Step - add a new step to the test

- Call to Test - this option allows you to call steps that were already defined in another test, into the test you are now creating. To call steps, click here and type in the ID of the existing test

- Traceability Tab - Link your test to Requirements and Stories from your Requirements module to create test coverage for them. From here you can always keep track of issues linked to this test and its previous runs

Each step consists of 3 fields - name, description, and expected result.
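The way a test's own steps combine with steps pulled in through Call to Test can be sketched in a few lines of Python. This is only an illustrative model, not PractiTest's implementation: the `Step`, `CallToTest`, and `expand_steps` names and the dictionary standing in for the Test Library are assumptions made for the example, and nested calls are not handled.

```python
from dataclasses import dataclass
from typing import Dict, List, Union


@dataclass
class Step:
    # The three fields every step has.
    name: str
    description: str
    expected_result: str


@dataclass
class CallToTest:
    # Hypothetical marker: "insert the steps of the test with this ID here".
    test_id: int


def expand_steps(
    items: List[Union[Step, CallToTest]],
    library: Dict[int, List[Step]],
) -> List[Step]:
    """Return a flat step list, replacing each call with the called test's steps."""
    flat: List[Step] = []
    for item in items:
        if isinstance(item, CallToTest):
            flat.extend(library[item.test_id])
        else:
            flat.append(item)
    return flat


# A reusable login test stored under the (made-up) ID 101.
login_steps = [
    Step("Open login page", "Go to the login page", "Login form is shown"),
    Step("Sign in", "Enter valid credentials", "Dashboard is shown"),
]
library = {101: login_steps}

# A new test that calls the login steps and then adds its own step.
checkout_test = [
    CallToTest(101),
    Step("Add item", "Add any item to the cart", "Cart shows 1 item"),
]

flat_steps = expand_steps(checkout_test, library)
```

Because the login steps live in one place, changing them in the called test changes every test that calls them, which is the point of reusing steps rather than copying them.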

Comments and Mentions

For every test, you can also add comments and mention other teammates using an ‘@’ sign. The teammates you mention will be notified via email.

Exploratory Tests

Exploratory Tests have ‘Charter’ (the mission for the session) and ‘Guide Points’ boxes instead of a description and steps. When running exploratory tests, you can add annotations of multiple types on the fly to record your findings during the session. Since this is session-based testing, each Exploratory Test can only run once. From an Exploratory Test run, you can also create a scripted test and reuse it in test sets. Learn more about it here.

BDD Tests

A BDD test is written as a Scenario or as a Scenario Outline. When you choose the BDD test type, you can use the common Gherkin syntax in the added Scenario field. Each Gherkin row you add becomes a step when you run the test. If you use a Scenario Outline, each example is added to the test set as a separate instance.

Read more about BDD tests here.
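To make the row-to-step and example-to-instance behavior concrete, here is a simplified Python sketch. It is not a full Gherkin parser and not how PractiTest processes scenarios internally: the function names are hypothetical, and it only handles Given/When/Then/And/But rows and a single Examples table.

```python
import re
from typing import Dict, List

STEP_PREFIXES = ("Given ", "When ", "Then ", "And ", "But ")


def gherkin_steps(scenario: str) -> List[str]:
    """Return one step per Gherkin row that starts with a step keyword."""
    rows = [line.strip() for line in scenario.splitlines()]
    return [row for row in rows if row.startswith(STEP_PREFIXES)]


def parse_examples(scenario: str) -> List[Dict[str, str]]:
    """Read the table rows (lines starting with '|') that follow 'Examples:'."""
    lines = [line.strip() for line in scenario.splitlines()]
    if "Examples:" not in lines:
        return []
    after = lines[lines.index("Examples:") + 1:]
    table = [line for line in after if line.startswith("|")]
    if not table:
        return []
    cells = [[c.strip() for c in row.strip("|").split("|")] for row in table]
    header, data = cells[0], cells[1:]
    return [dict(zip(header, row)) for row in data]


def expand_outline(scenario: str) -> List[List[str]]:
    """Produce one list of concrete steps per example row."""
    steps = gherkin_steps(scenario)
    instances = []
    for example in parse_examples(scenario):
        # Replace each <placeholder> with the value from this example row.
        instances.append(
            [re.sub(r"<(\w+)>", lambda m: example[m.group(1)], s) for s in steps]
        )
    return instances


outline = """
Scenario Outline: Login
  Given a user named <user>
  When they log in with password <password>
  Then the result is <result>

  Examples:
    | user  | password | result  |
    | alice | secret   | success |
    | bob   | wrong    | failure |
"""

instances = expand_outline(outline)
```

Here the outline has three Gherkin rows and two example rows, so the sketch yields two instances with three steps each.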

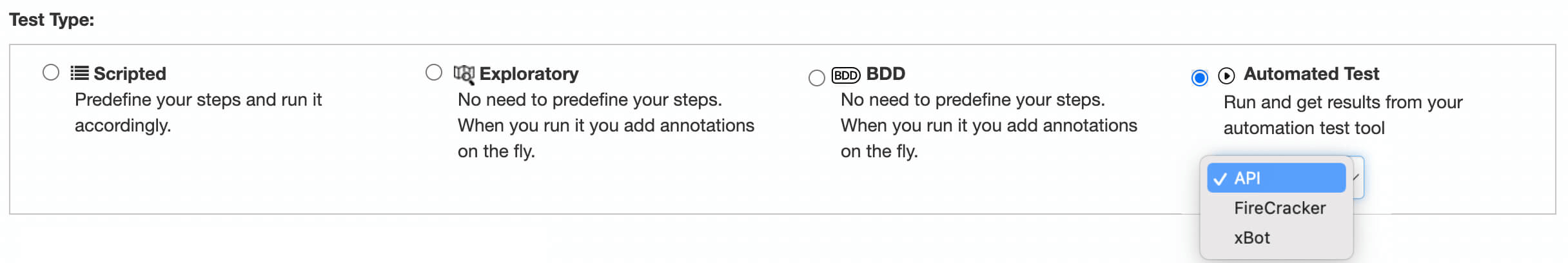

Automated Tests

Automated tests run automatically and update PractiTest in one of the following ways:

- API: reporting automation results using the REST API

- FireCracker: automatically converts XML results files received from CI/CD tools to PractiTest tests and runs

- xBot: an internal automation framework to run, schedule, and execute tests

When you choose any of the automated test types, you can add the description in the General tab, and an “Automation Info” tab will appear where you can write down your Automated Test Design and the Script Repository.

Read more about Automated tests here.
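As a rough illustration of the API option, the sketch below builds (but does not send) a request that reports one automated run. Treat every specific in it as an assumption to verify against the official API reference: the base URL, the `runs.json` endpoint path, the `instance-id` and `exit-code` field names, the use of basic authentication, and the placeholder project ID, instance ID, email, and token are not taken from this guide.

```python
import base64
import json
import urllib.request

# Assumption: base URL and endpoint shape of the PractiTest REST API (v2).
# Check the official API reference before relying on any of this.
BASE_URL = "https://api.practitest.com/api/v2"


def build_run_request(project_id, instance_id, exit_code, email, api_token):
    """Build (but do not send) a request that reports one automated run."""
    payload = {
        "data": {
            "type": "instances",
            # Assumed field names; exit code 0 is assumed to mean "passed".
            "attributes": {"instance-id": instance_id, "exit-code": exit_code},
        }
    }
    credentials = base64.b64encode(f"{email}:{api_token}".encode()).decode()
    return urllib.request.Request(
        url=f"{BASE_URL}/projects/{project_id}/runs.json",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Basic {credentials}",
        },
        method="POST",
    )


# Placeholder values only; replace with your own project and instance IDs.
request = build_run_request(1234, 5678, 0, "tester@example.com", "YOUR_API_TOKEN")
# To actually send it: urllib.request.urlopen(request)
```

Keeping request construction separate from sending makes it easy to inspect the payload in a CI job before any result is written to a project.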

Importing Tests from Excel

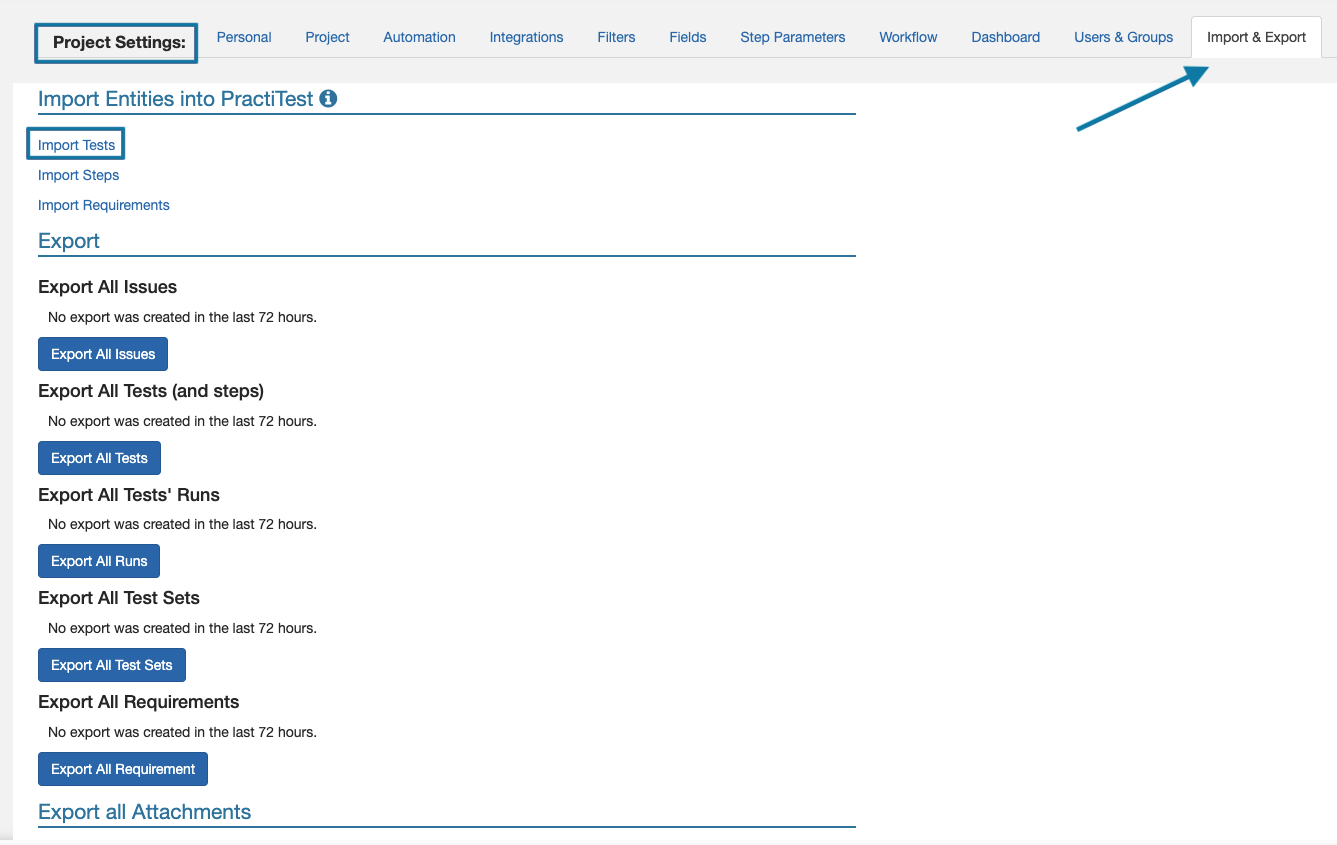

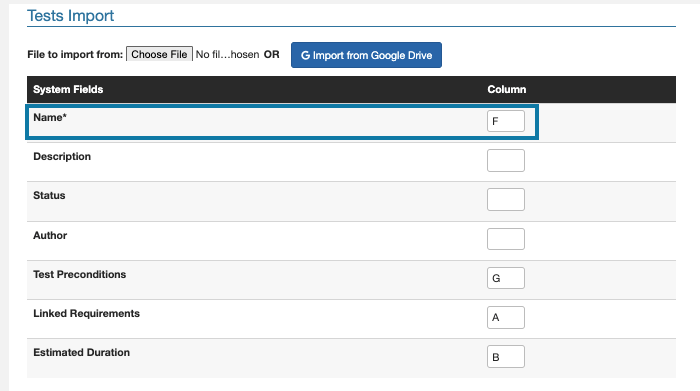

To access the import page, click on Settings - Import & Export. Then, click on ‘Import tests’.

On the import page, you need to map the columns of your spreadsheet to the fields shown on the page.

For example, if test names are listed in column ‘F’ of your spreadsheet, replace the letter ‘A’ with ‘F’.

Please note: if your spreadsheet contains a row of headers, you will need to tick the ‘ignore first row’ box at the bottom of the page.

You can find the full import guide here.
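The column mapping described above can be pictured with a small Python sketch. It is not the import tool itself: `column_index`, `map_rows`, the field names, and the sample rows are all made up for illustration, and the spreadsheet is represented as a plain list of rows.

```python
from typing import Dict, List


def column_index(letter: str) -> int:
    """Convert a spreadsheet column letter ('A', 'F', 'AA') to a 0-based index."""
    index = 0
    for char in letter.upper():
        index = index * 26 + (ord(char) - ord("A") + 1)
    return index - 1


def map_rows(
    rows: List[List[str]],
    mapping: Dict[str, str],
    ignore_first_row: bool = False,
) -> List[Dict[str, str]]:
    """Turn spreadsheet rows into field dictionaries using a field-to-column mapping."""
    data = rows[1:] if ignore_first_row else rows
    return [
        {field: row[column_index(col)] for field, col in mapping.items()}
        for row in data
    ]


rows = [
    ["ID", "Owner", "Title"],          # header row
    ["1", "dana", "Login works"],
    ["2", "omar", "Logout works"],
]

# Hypothetical mapping: the test name is in column C, the author in column B.
mapping = {"name": "C", "author": "B"}

tests = map_rows(rows, mapping, ignore_first_row=True)
```

Without `ignore_first_row=True`, the header row would be mapped as if it were a test, which is why the ‘ignore first row’ box matters when your spreadsheet has headers.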

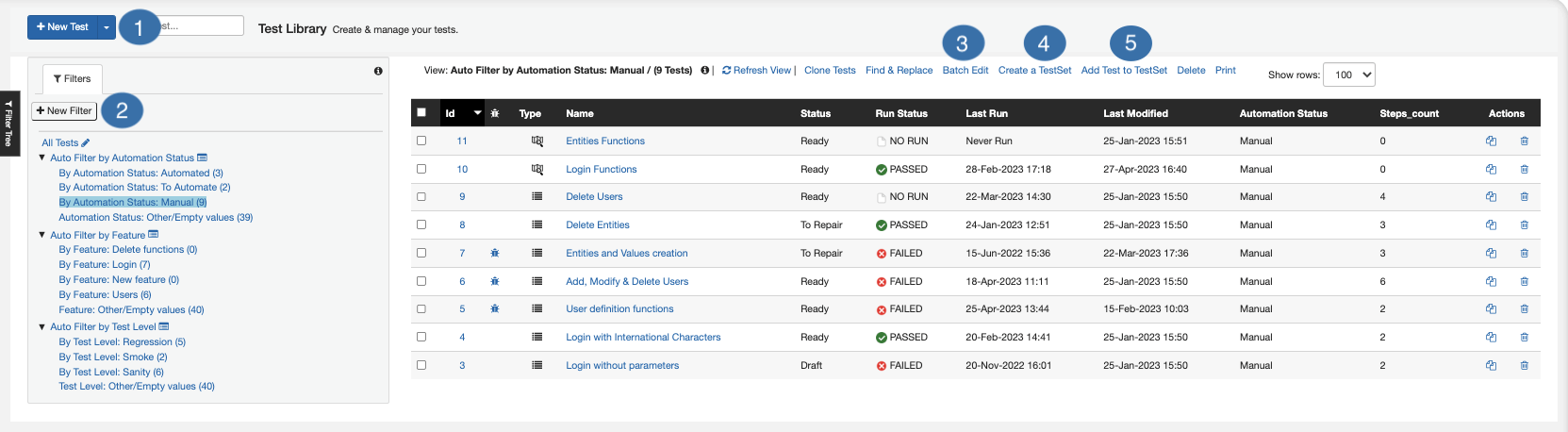

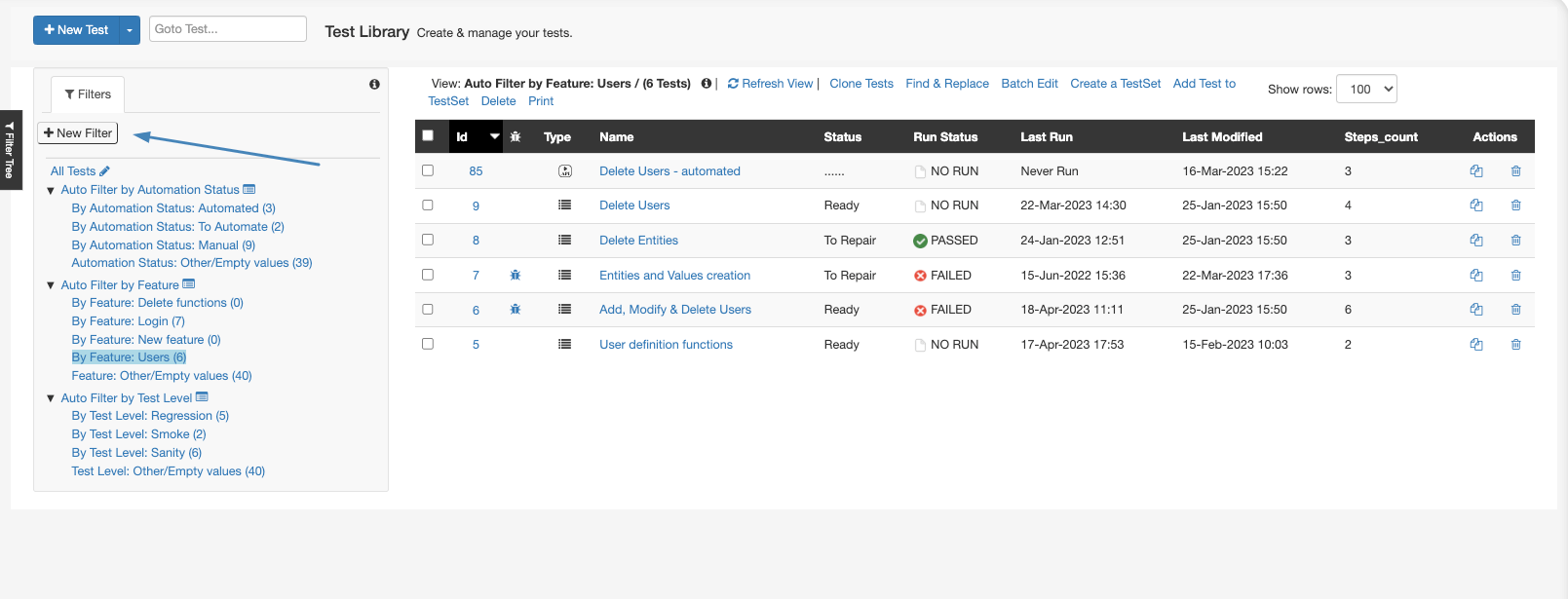

Test Library Overview

- Creating a new test - create a new test in the test library

- Filters section - filters allow you to focus on a specific group of your tests. From this section you can use existing filters, and create new ones

- Batch-Edit - edit the information of more than one test in one operation. Select the tests you want to edit, and click batch edit to edit their information

- Create a TestSet - create test sets directly from the Test Library. Test sets will be discussed in depth later on in this guide

- Add test to TestSet - add tests to existing test sets in the Test Sets & Runs module

Test Sets & Runs - Running Tests and Recording Results

The Test Sets & Runs module is where test execution takes place and where you record test results. Test Sets, also known as Test Suites, are groups of tests that are planned to run together for a certain purpose.

Test Sets allow you to re-use your tests as many times as you need, in order to make your process more efficient.

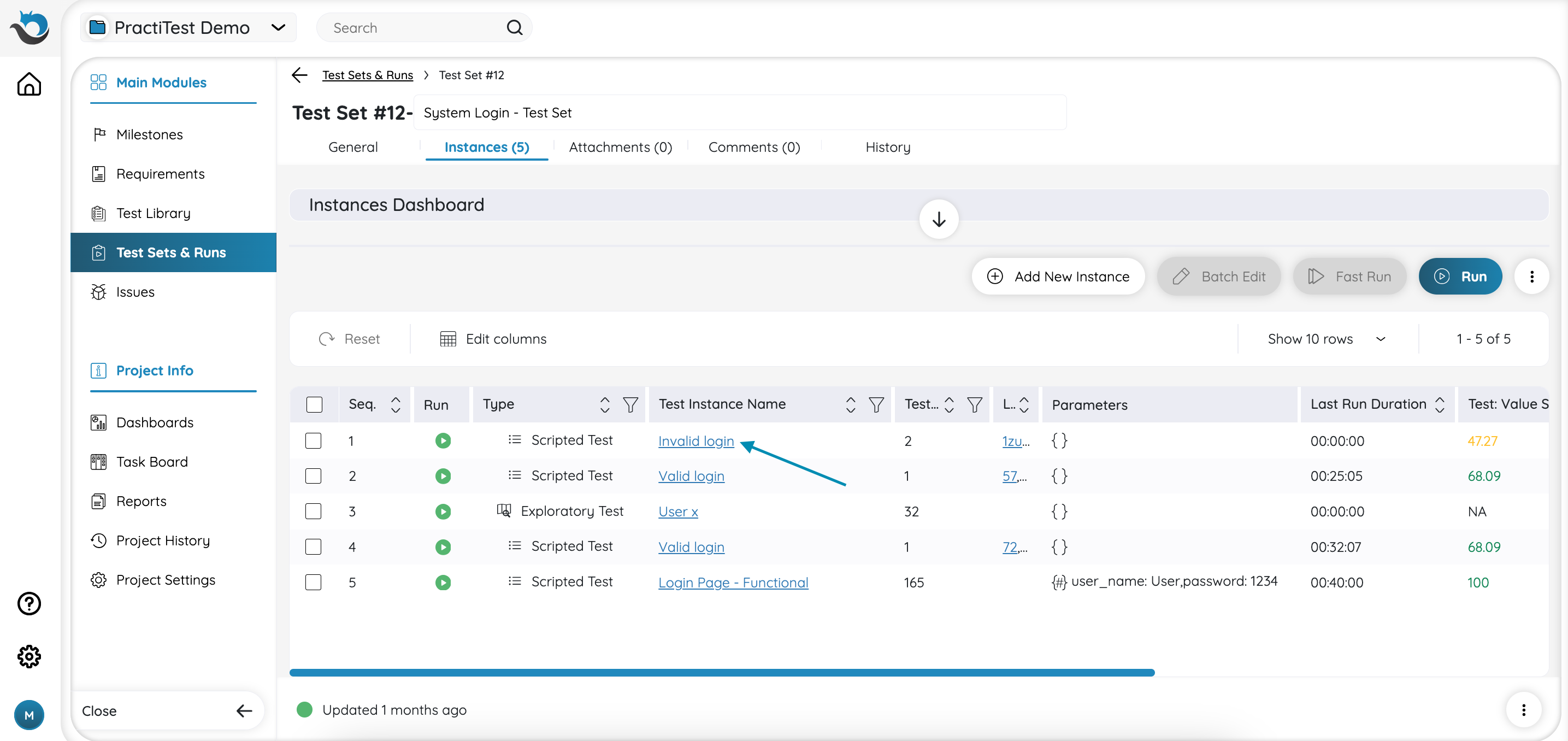

Test Instances

When you add test cases to test sets, copies of the original tests in the library are created. In PractiTest, these copies are called ‘Test Instances’. This approach allows you to reuse tests from the library multiple times in different test sets and run them for different purposes.

When the information of a test is updated in the test library, all corresponding test instances are updated accordingly. This allows you to maintain a single version of a test case, and ensure you run the most recent version of a test case at all times.

Each test instance can run multiple times (except exploratory testing, which can only run once), and each of its previous runs is preserved and can be accessed in the future. This way, you can keep a record of test runs made before updates were made to the test information, and review historical test executions of every test instance.
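The relationship between library tests, test instances, and runs can be modeled with a short Python sketch. It is a conceptual illustration only: the class names are invented, and representing an instance as a reference to the library test is a simplification chosen so that library updates show up in every instance while past runs keep their own snapshot.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class LibraryTest:
    name: str
    steps: List[str]
    exploratory: bool = False


@dataclass
class Run:
    status: str
    steps_snapshot: List[str]  # the steps as they were when the run happened


@dataclass
class Instance:
    test: LibraryTest  # points at the library test, so updates are visible
    runs: List[Run] = field(default_factory=list)

    def run(self, status: str) -> Run:
        if self.test.exploratory and self.runs:
            raise RuntimeError("An exploratory test instance can only run once")
        new_run = Run(status, list(self.test.steps))  # copy preserves history
        self.runs.append(new_run)
        return new_run


login = LibraryTest("Login", ["Open page", "Sign in"])
regression = Instance(login)  # the same test used in two test sets
smoke = Instance(login)

regression.run("PASSED")
login.steps.append("Check dashboard")  # update the test in the library
regression.run("FAILED")
```

After the update, both instances see three steps, while the first run of `regression` still records the two steps that existed when it was executed.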

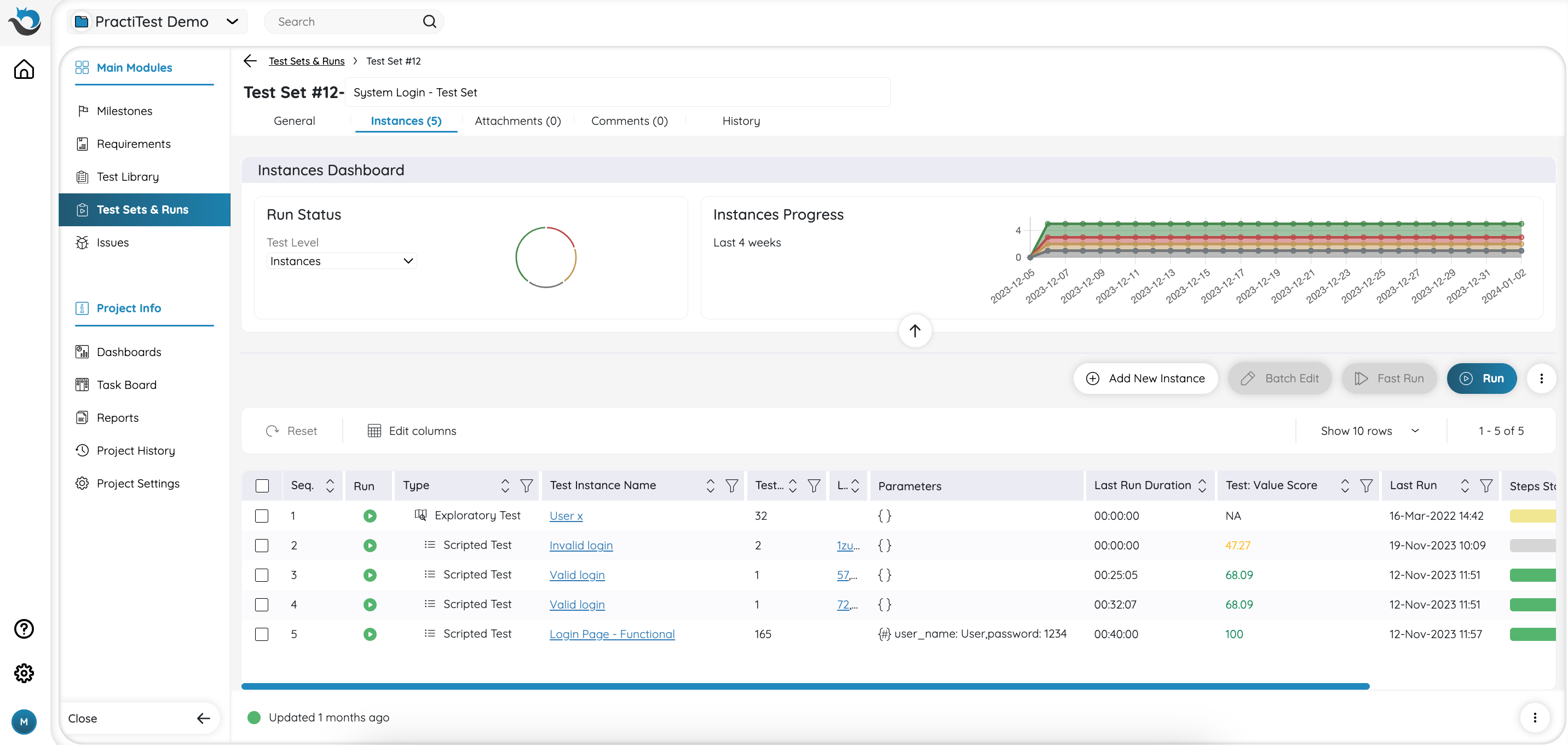

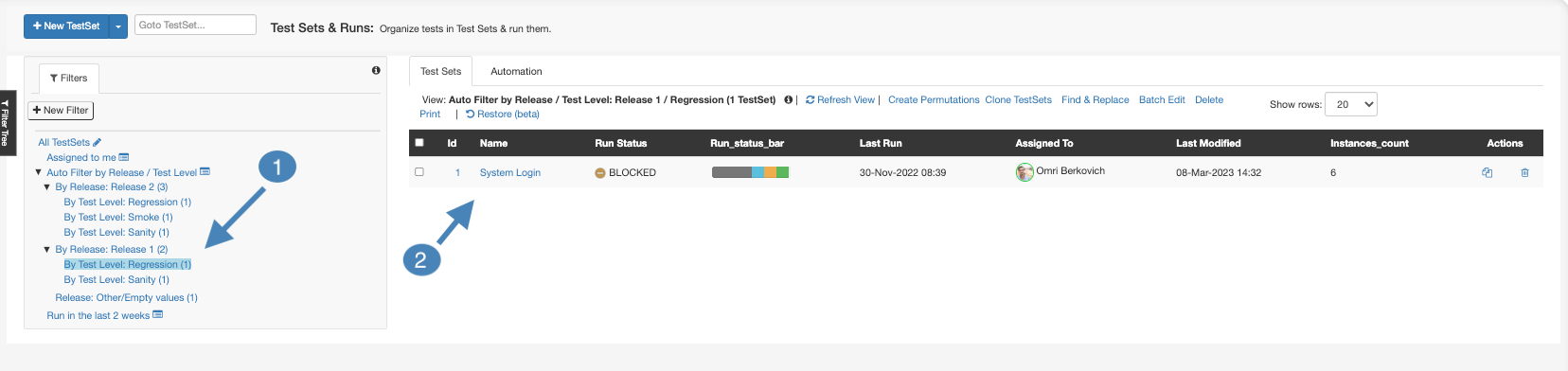

Accessing a Test Set

- Test Sets Filter Views - on the left side of the screen, you can find all filter views that currently exist in your project. To narrow down the list of ‘Test Sets’ you see in the main view, access a relevant group by clicking on a filter. For example, if you were assigned to run regression for Sprint 23, find the ‘Regression’ filter under ‘Sprint 23’ and click on it.

- Accessing a Test Set - after accessing the filtered view, find the Test Set that is assigned to you, and click on its name to access it.

Note: If you don’t have existing filters in your project, learn how to create them here.

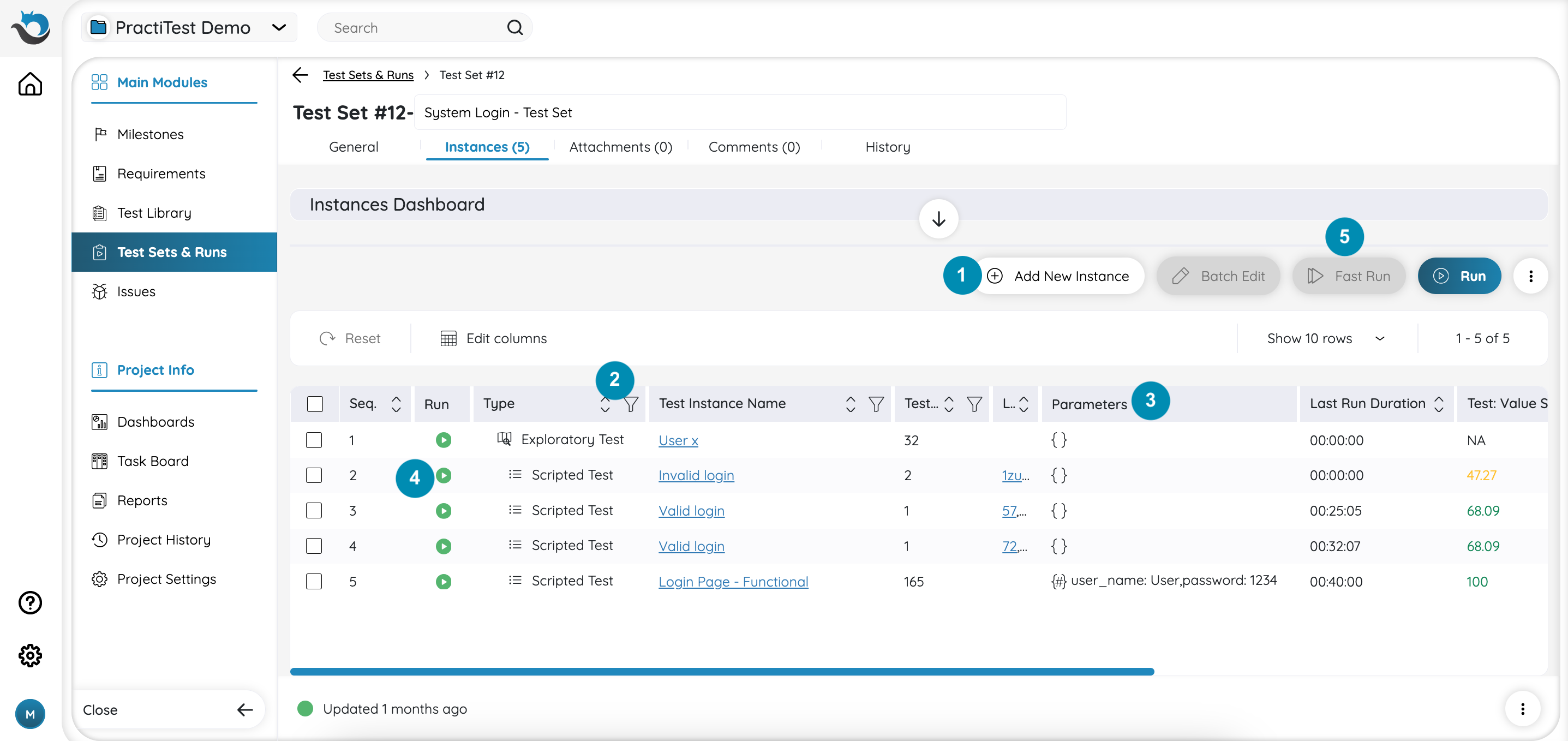

Test Set Options

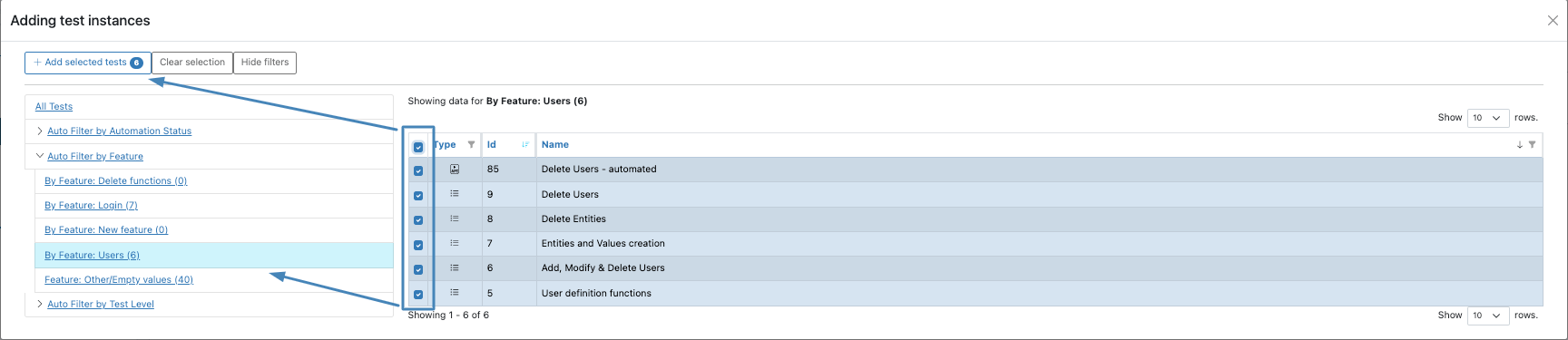

- Add Test Instances - Add tests to the test set as test instances. Use the Test Library filters, shown on the left-hand side, to narrow down the view

- Fast Filters - Click on the filter icon next to a column to filter the view based on an attribute

- Columns - You can customize the columns shown in this grid by clicking the 3-dot button at the top right side of the grid, and choosing 'Edit columns'

- Run - Start a new run for a test instance

- Fast Run - Quickly assign a status to a test instance

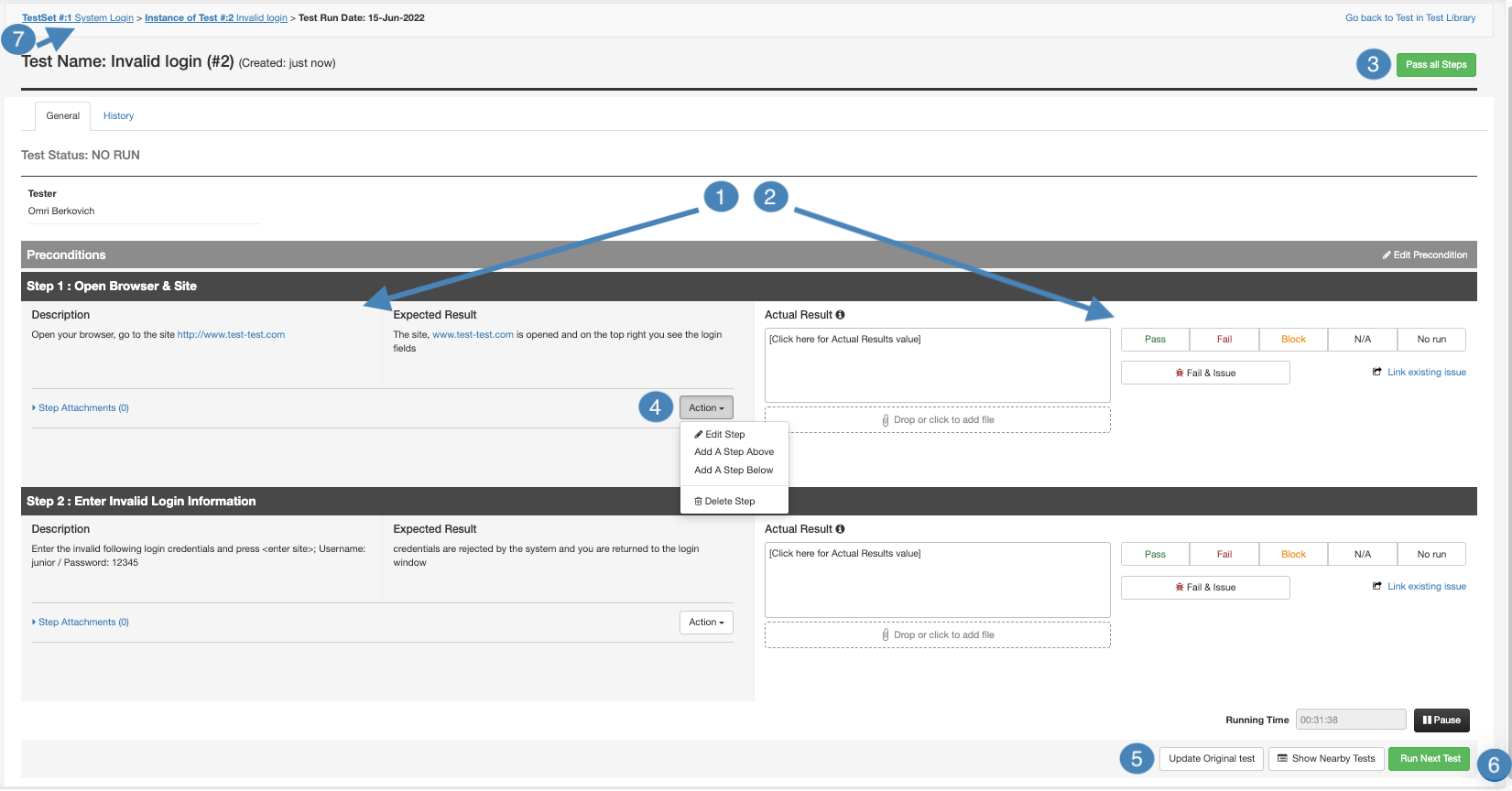

Test Runs

- Steps Information - here you can find the steps information to verify when running the test, including step name, description, and expected result

- Actual Results - this is where you record the result of each step. Type the actual result of the step in the ‘actual result’ box, select a status for it from the status bar, and add attachments if needed

- Fail & Issue - use the Fail & Issue option to report a bug directly from the step if needed. Using this option, the step will be set to Fail, and the description of the bug will be prefilled with the information of the steps that led you to find the bug, saving you time filling this out again

- Link Existing Issue - if the bug you found was already reported previously, use this option to link it to a step

- Pass All Steps - assign the status 'PASSED' to all steps in the test run

- Steps Actions - modify steps information, add additional steps, or delete a step

- Update Original Test - when making changes to your test, you can use this option to update the original test in the test library. Using it will ensure your changes apply to future runs of your test. Otherwise, your changes will only apply to this specific test run.

- Run Next Test - proceed to run the next test in the queue

- Go Back to Test Set - by clicking on the Test Set name in the breadcrumbs
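To show the idea behind Fail & Issue, the Python sketch below assembles reproduction text from the steps that led to a failure. The exact text PractiTest prefills is not shown in this guide, so the output format, the `StepResult` type, and the `issue_description` function are assumptions for illustration.

```python
from typing import List, NamedTuple


class StepResult(NamedTuple):
    name: str
    expected: str
    actual: str
    status: str


def issue_description(steps: List[StepResult], failed_index: int) -> str:
    """Build reproduction text from every step up to and including the failed one."""
    lines = []
    for number, step in enumerate(steps[: failed_index + 1], start=1):
        lines.append(f"{number}. {step.name} [{step.status}]")
        lines.append(f"   Expected: {step.expected}")
        lines.append(f"   Actual: {step.actual}")
    return "\n".join(lines)


steps = [
    StepResult("Open login page", "Login form is shown", "Login form is shown", "PASSED"),
    StepResult("Sign in", "Dashboard is shown", "Error 500 page", "FAILED"),
    StepResult("Open profile", "Profile page is shown", "", "NO RUN"),
]

description = issue_description(steps, failed_index=1)
```

Steps after the failed one are left out, since they were not part of the path that led to the bug.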

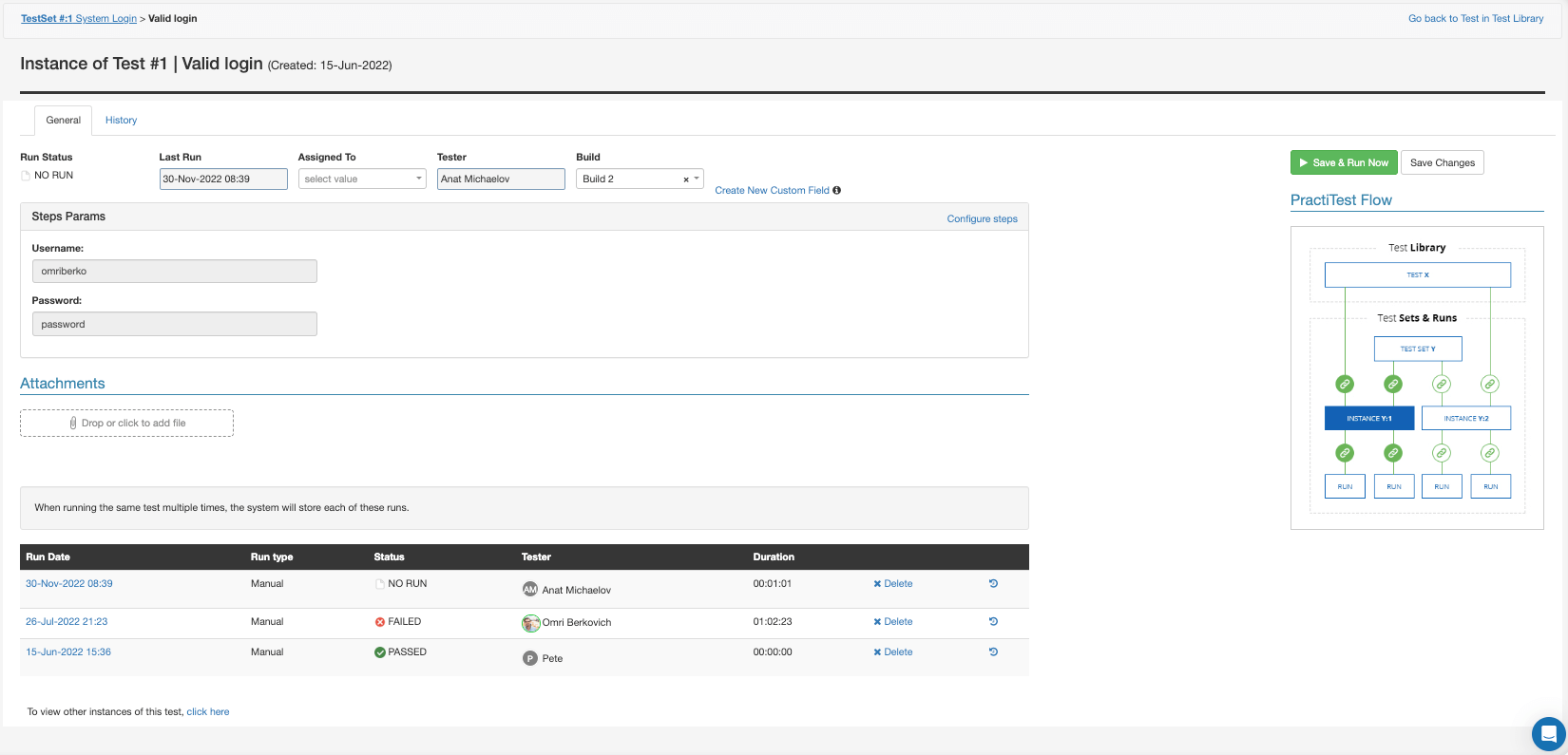

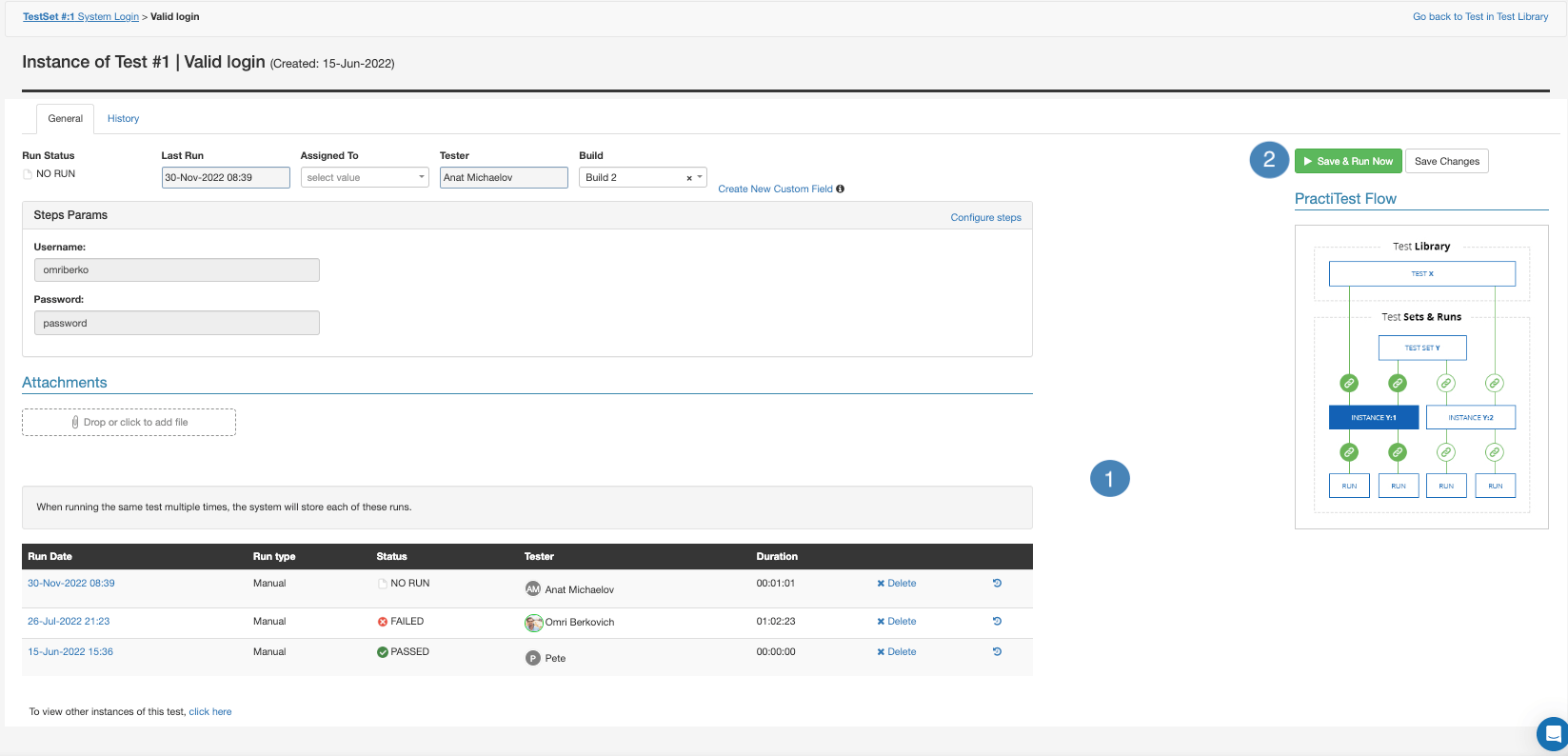

Test Instance Screen

To see more information about a test instance, including its previous test runs information, click on the instance name from the test set page.

- Runs History - here you can see a summary of all the previous runs of this test instance

- Save & Run Now - start a new run for this test instance

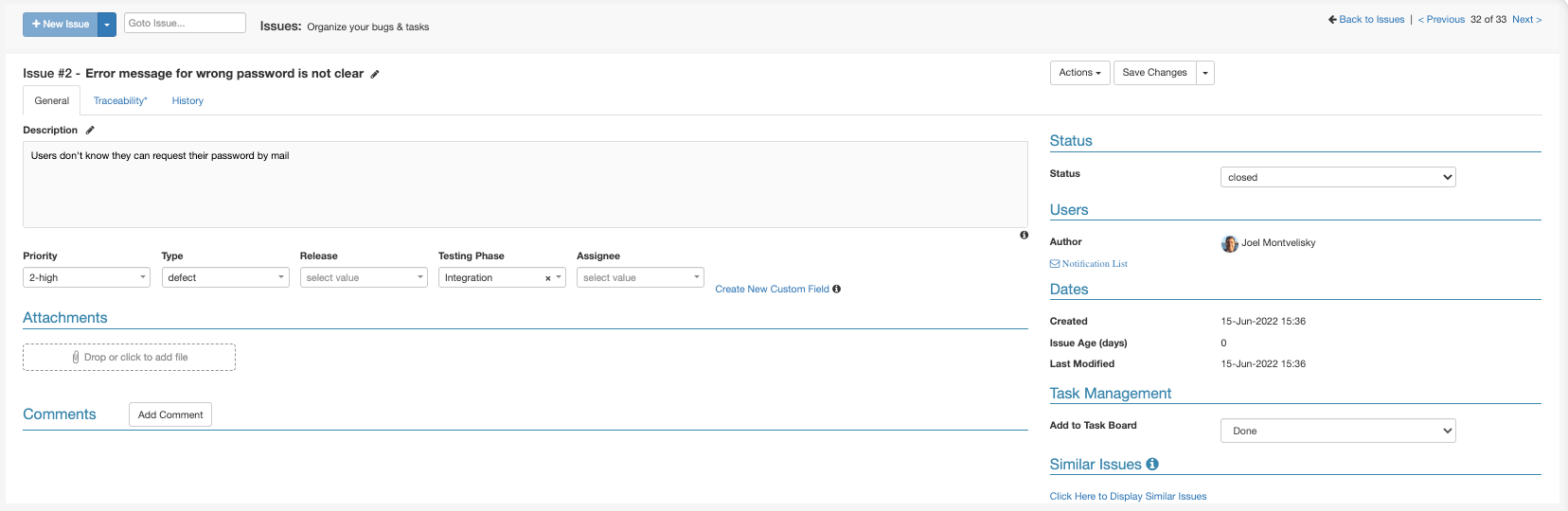

Tracking Bugs and Issues

Issues, bugs, and defects are managed in the Issues module. This includes the bugs and issues you record during your testing, which are created there as well.

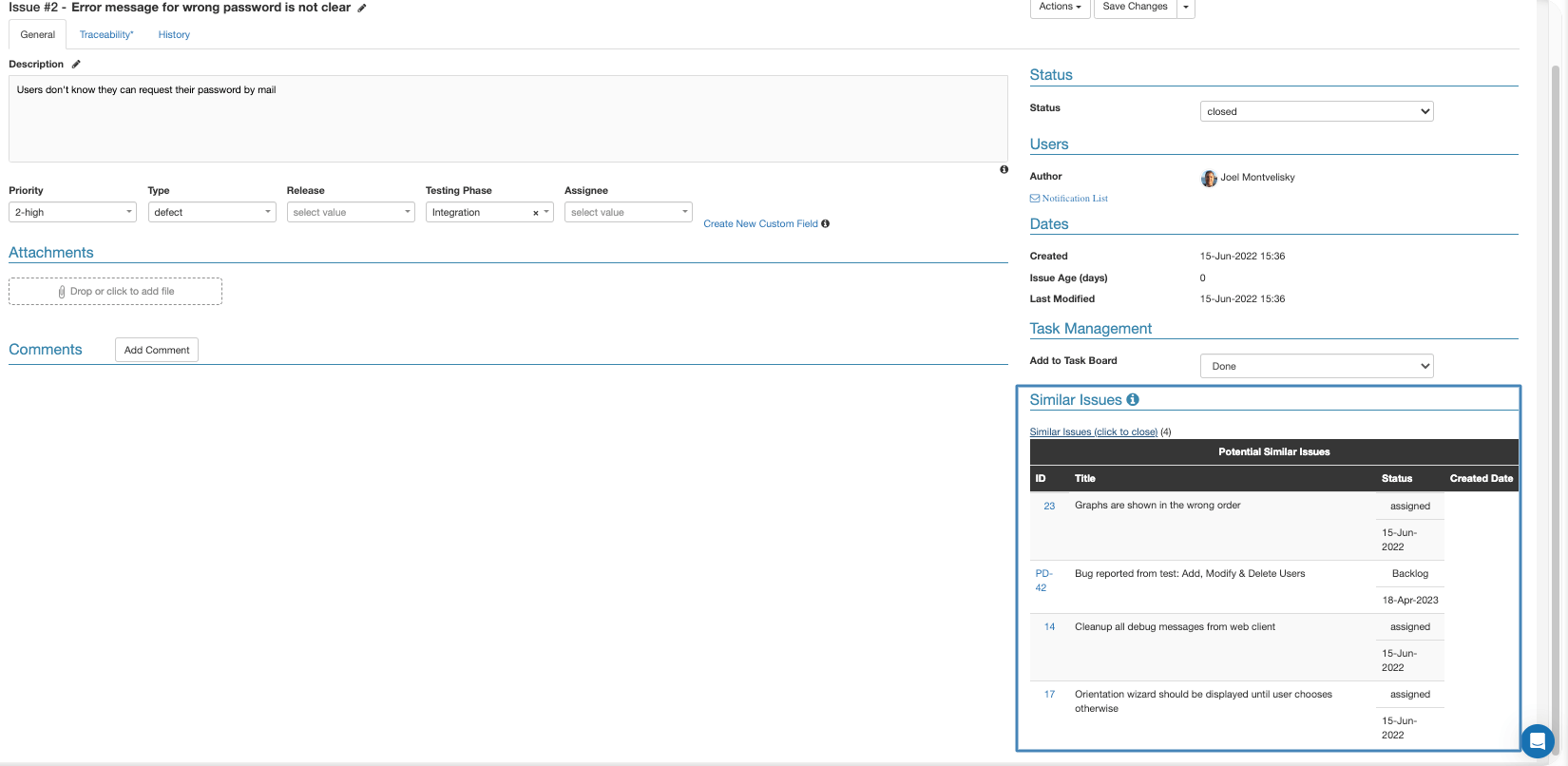

When creating a new issue, you can check whether a similar issue already exists by clicking the ‘display similar issues’ link after you start typing the issue name.

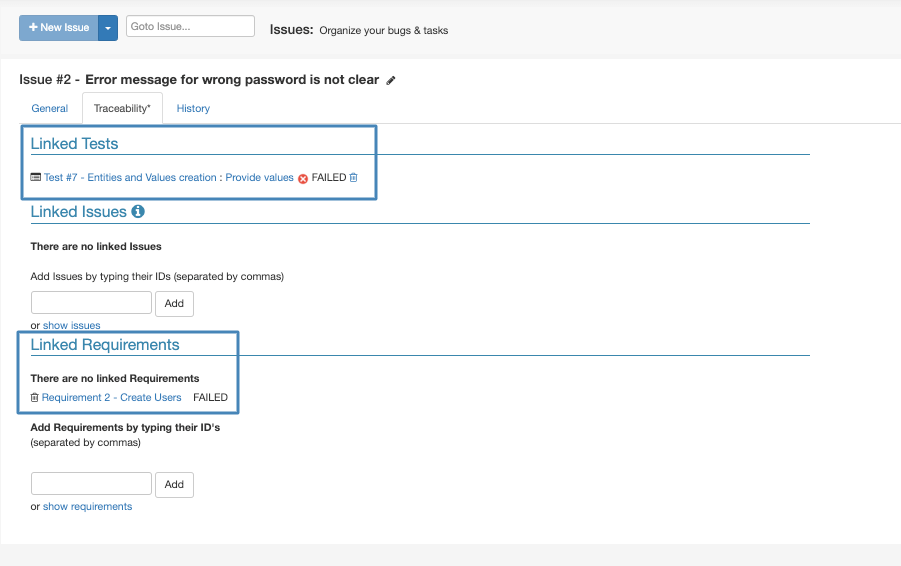

The traceability tab of the issue is where you see the links between the issue and its related tests and requirements.

Comments and Mentions

Just like with tests, you can add comments to your issues and mention other teammates using an @ sign. Teammates you mention will be notified by the system via email.

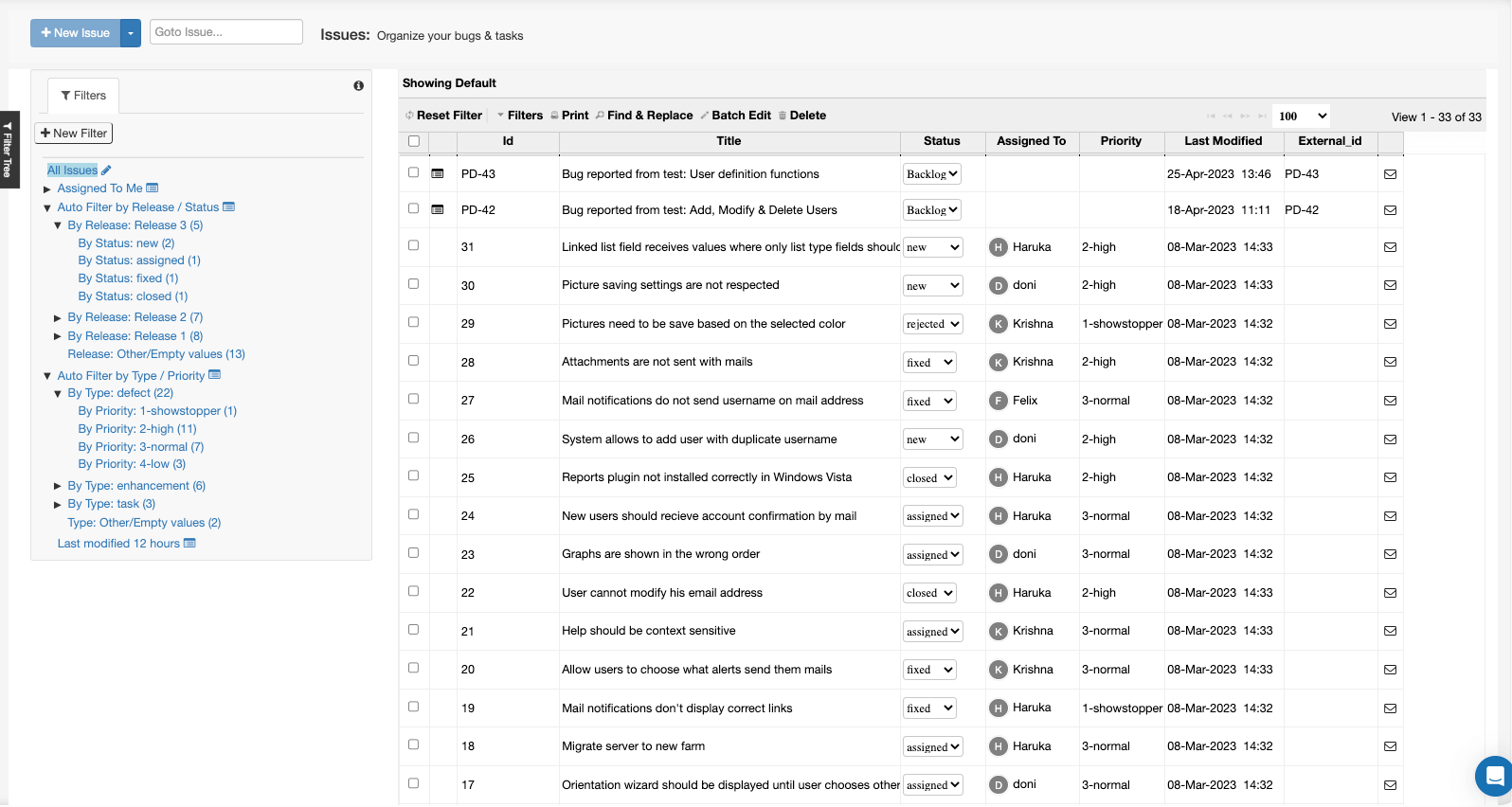

The Issues Module

From the Issues module, you can access all the issues that exist in your project. You can also see the status of each issue at a glance in the ‘Status’ field. To narrow down the list of issues you see in the main grid, you can use the existing filters on the left, or create new filters. For example, if you want to see which issues are ready to be re-tested, you can create a filter that shows all issues with the ‘Fixed’ status.

Issues Integrations - Jira, ClickUp, Azure DevOps

When your projects are integrated with an external system such as the ones mentioned above, issues you report from test runs are created directly in the external system. In these cases, a new issue is still created in your PractiTest Issues module as well, and it is linked to the ticket on the other platform. Every change made to the name, description, or status of the ticket in the external system is reflected in the corresponding issue in PractiTest. This allows you to track the progress being made on the other platform directly from PractiTest, and identify when issues are ready to be re-tested.

Requirements and User Stories Management

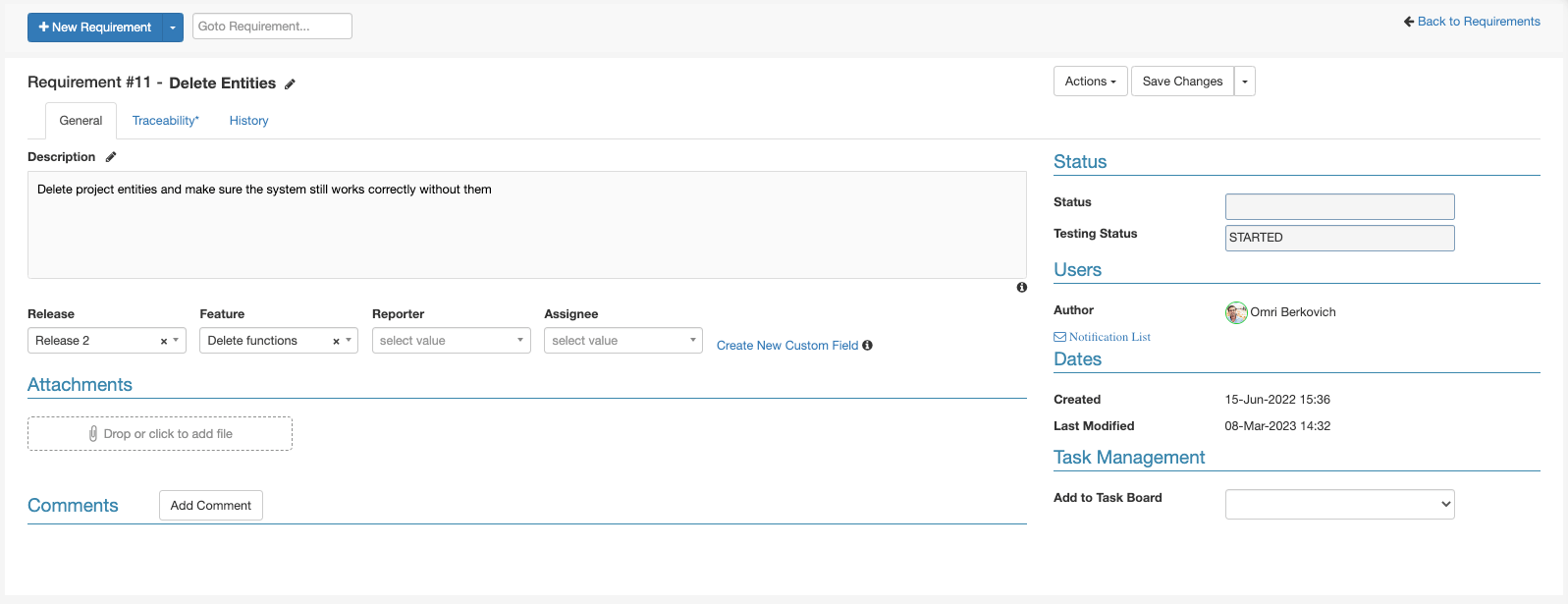

Requirements and user stories are managed in the Requirements module. The requirement ticket contains all the relevant information about the requirement in the ‘General’ tab.

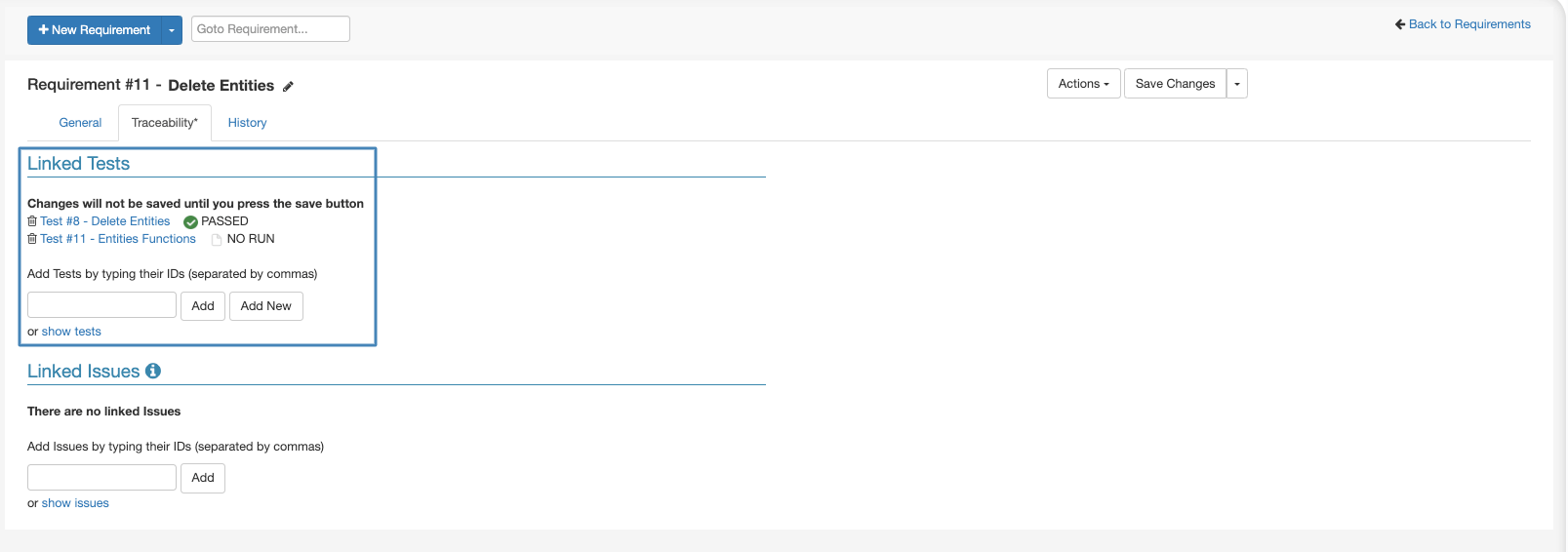

You can see what tests are linked to your requirement or link tests to it from the traceability tab of the requirement ticket.

Requirements Status Field

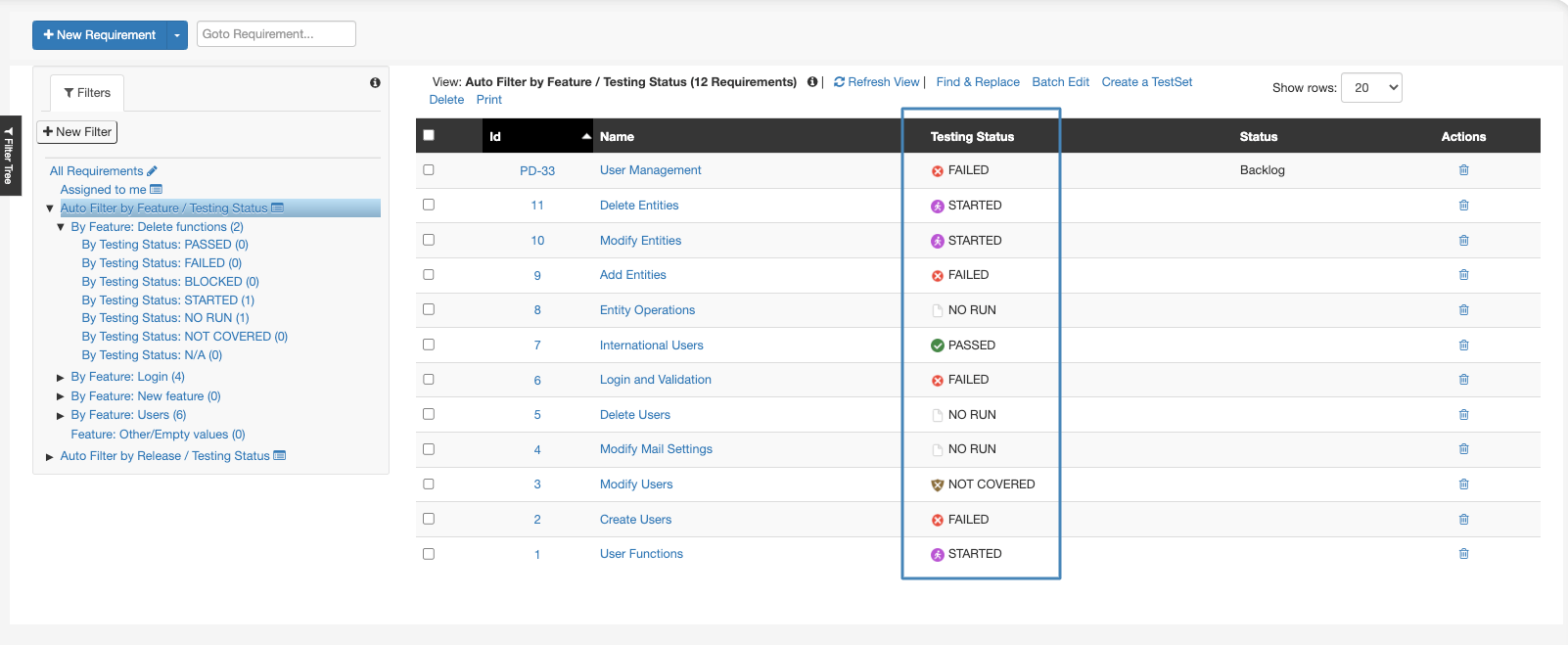

The ‘Testing Status’ field in the requirement will be populated automatically based on the status of the tests linked to the requirement. This allows you to quickly see the testing status of the requirement and the progress made on it.

Below you can find the criteria for each status:

NOT COVERED – There are no tests linked to this Requirement.

NO RUN – There is at least one test linked to the requirement. None of the linked tests ran.

STARTED – There is at least one test linked to the requirement that started running. None of them Failed or was set to Blocked.

PASSED – All linked tests ran and passed.

BLOCKED – At least one of the linked tests was set to blocked. None of the linked Tests Failed.

FAILED – At least one of the linked tests failed.

N/A – All tests linked to this requirement are marked as N/A.
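The criteria above can be read as a small decision procedure. The Python sketch below is one literal reading of them, not PractiTest's actual logic: the function name is hypothetical, the set of individual test statuses is assumed, and the handling of mixed cases that the list does not spell out (for example, N/A tests alongside passed tests) is a guess.

```python
from typing import Iterable

# Assumed set of statuses an individual linked test can have.
KNOWN_STATUSES = {"PASSED", "FAILED", "BLOCKED", "NO RUN", "N/A"}


def requirement_testing_status(test_statuses: Iterable[str]) -> str:
    """Derive a requirement's Testing Status from its linked tests' statuses."""
    statuses = list(test_statuses)
    unknown = set(statuses) - KNOWN_STATUSES
    if unknown:
        raise ValueError(f"Unknown test status: {sorted(unknown)}")

    if not statuses:
        return "NOT COVERED"  # no tests linked
    if all(s == "N/A" for s in statuses):
        return "N/A"  # every linked test is marked N/A
    if "FAILED" in statuses:
        return "FAILED"  # a failure outranks everything else
    if "BLOCKED" in statuses:
        return "BLOCKED"  # blocked, and nothing failed
    if all(s == "PASSED" for s in statuses):
        return "PASSED"  # all linked tests ran and passed
    if "PASSED" not in statuses:
        return "NO RUN"  # only NO RUN / N/A remain, so nothing ran
    return "STARTED"  # some tests ran, none failed or blocked


examples = {
    "no tests": requirement_testing_status([]),
    "partly run": requirement_testing_status(["PASSED", "NO RUN"]),
    "one failure": requirement_testing_status(["PASSED", "BLOCKED", "FAILED"]),
}
```

The order of the checks matters: FAILED is tested before BLOCKED because a requirement is only BLOCKED when none of its linked tests failed.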

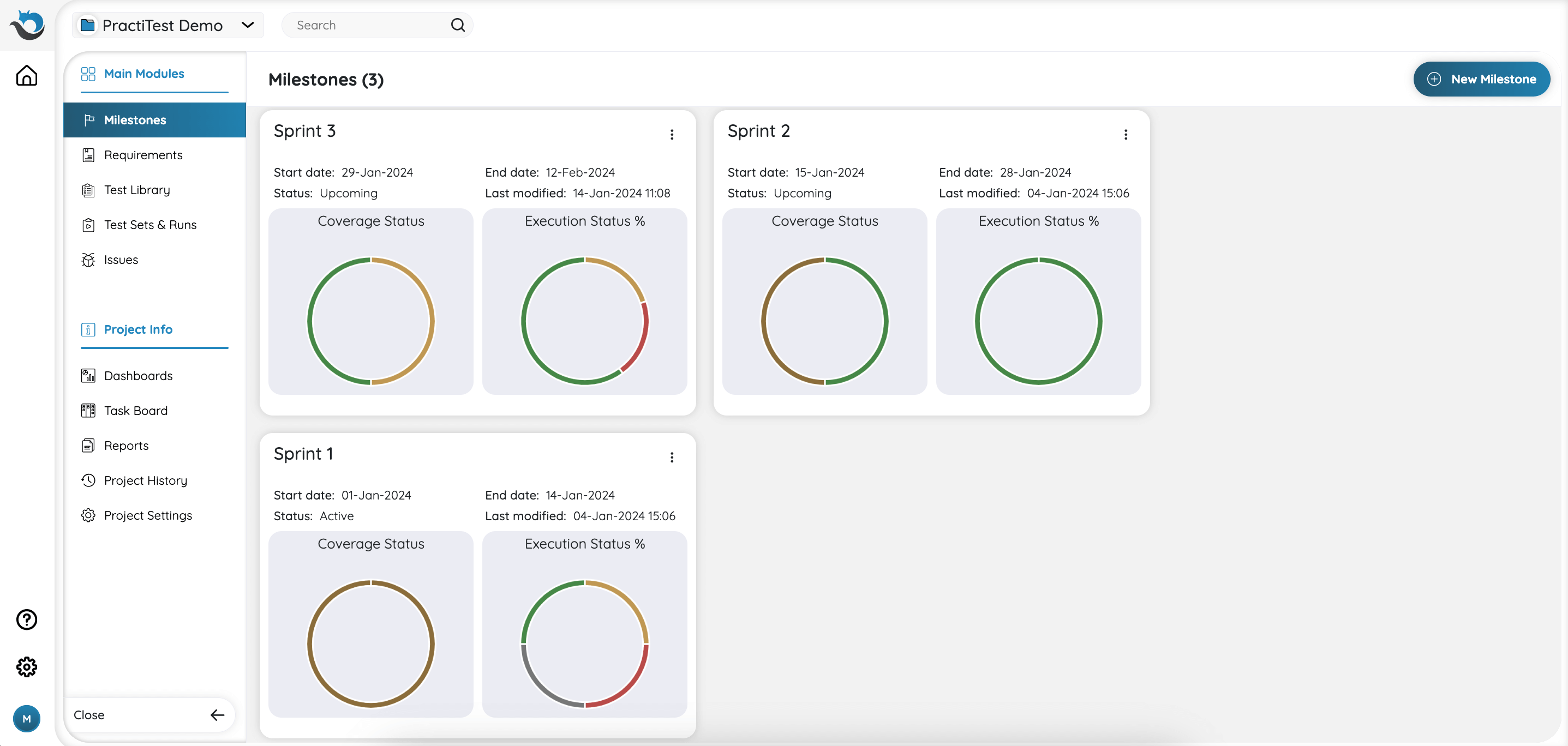

The Milestones Module

The Milestones module within PractiTest serves as a project management module that allows you to establish higher scope testing goals within specified timeframes. It offers a convenient and efficient way to track the overall project status and monitor its progression.

You can use Milestones when launching new software releases across various versions, organizing testing activities around agile sprints, or testing various sets of products.

Within each Milestone, you can associate relevant testing elements such as Requirements, Test Sets, and Issues, ensuring seamless accessibility and management of all components within the scope of the milestone.

Filters

Filters are a powerful tool for creating customized views in each of PractiTest’s main modules, based on dynamic queries and field values. By creating filter views, you can focus the list of items you see in each module on a specific group of items, instead of going through an endless list.

In addition to convenience, filters are also used for reporting purposes, allowing you to create reporting items based on a filter’s content.

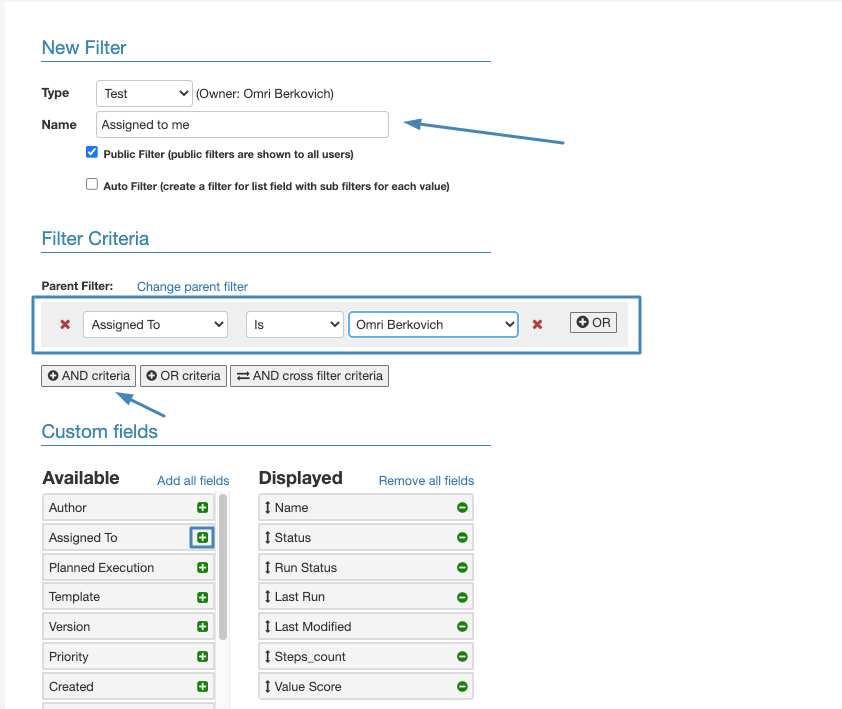

To create a new filter in one of the modules, click on ‘+New Filter’ from the left side of the screen.

After adding a name for your filter, add an ‘AND’ Query from the filter criteria section, and select the field you want to filter by from the dropdown menu.

At the bottom of the screen, drag the fields you want to show on the grid from ‘Available’ to ‘Displayed’.
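Conceptually, a filter with ‘AND’ criteria and a set of displayed fields behaves like the Python sketch below. It only illustrates the idea: `apply_filter`, the field names, and the sample issues are invented, and only exact-match criteria are modeled.

```python
from typing import Any, Dict, List


def apply_filter(
    items: List[Dict[str, Any]],
    criteria: Dict[str, Any],
    displayed: List[str],
) -> List[Dict[str, Any]]:
    """Keep items that match ALL criteria, then show only the displayed fields."""
    matches = [
        item
        for item in items
        if all(item.get(name) == value for name, value in criteria.items())
    ]
    return [{name: item.get(name) for name in displayed} for item in matches]


issues = [
    {"id": 1, "title": "Login error", "status": "Fixed", "assignee": "dana"},
    {"id": 2, "title": "Slow search", "status": "New", "assignee": "dana"},
    {"id": 3, "title": "Broken link", "status": "Fixed", "assignee": "omar"},
]

# Issues that are ready for re-test AND assigned to dana.
ready_for_retest = apply_filter(
    issues,
    criteria={"status": "Fixed", "assignee": "dana"},
    displayed=["id", "title"],
)
```

Each added ‘AND’ criterion narrows the result further, while the displayed fields only change which columns you see, not which items match.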

There are many more options when it comes to filters. For a deeper look into the subject, visit this guide.