What is UAT (User Acceptance Testing)?

Acceptance testing can take many forms, such as user acceptance testing, operational acceptance testing, contract acceptance testing and others. In this article, the focus is on user acceptance testing.

When people ask, “What is UAT testing?”, one commonly cited definition of user acceptance testing is:

“Formal testing with respect to user needs, requirements, and business processes conducted to determine whether or not a system satisfies the acceptance criteria and to enable the user, customers or other authorized entity to determine whether or not to accept the system.” (ISTQB Glossary V3.1)

There are other definitions as well, which highlight the need to understand the context of user acceptance testing.

For example, in agile methods “acceptance testing” is defined as:

“An acceptance test is a formal description of the behavior of a software product, generally expressed as an example or a usage scenario…..Teams mature in their practice of agile use acceptance tests as the main form of functional specification and the only formal expression of business requirements. Other teams use acceptance tests as a complement to specification documents containing uses cases or more narrative text.” (Agile Alliance)

In addition, the Agile Alliance also adds:

“The terms “functional test”, “acceptance test” and “customer test” are used more or less interchangeably. A more specific term “story test”, referring to user stories is also used, as in the phrase “story test driven development”.” (Agile Alliance)

From these differences, we can see that traditional acceptance testing is seen in a validation context, while agile methods tend to view acceptance testing as verification. In the agile context, acceptance criteria are described along with a user story to identify the specific cases that should be tested to show the user story has been implemented correctly. These criteria are essentially the same as functional tests.
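To make the agile view concrete, an acceptance criterion attached to a user story can be written as an executable check. The following is only a minimal sketch in Python. The user story ("a customer can be added with a name and an e-mail address"), the `CustomerRegistry` class and both check functions are hypothetical names invented for illustration, not part of any real system or tool.

```python
class CustomerRegistry:
    """Hypothetical in-memory stand-in for the system under test."""

    def __init__(self):
        self._customers = {}

    def add_customer(self, name, email):
        # Reject obviously incomplete input and duplicate customers.
        if not name or "@" not in email:
            raise ValueError("a name and a valid e-mail address are required")
        if email in self._customers:
            raise ValueError("customer already exists")
        self._customers[email] = name

    def find(self, email):
        return self._customers.get(email)


def check_customer_can_be_added():
    # Acceptance criterion: a customer added with valid details can be found afterwards.
    registry = CustomerRegistry()
    registry.add_customer("Ada Example", "ada@example.com")
    return registry.find("ada@example.com") == "Ada Example"


def check_duplicate_customer_is_rejected():
    # Acceptance criterion: the same customer cannot be added twice.
    registry = CustomerRegistry()
    registry.add_customer("Ada Example", "ada@example.com")
    try:
        registry.add_customer("Ada Example", "ada@example.com")
    except ValueError:
        return True
    return False
```

Checks like these verify that the story was implemented as specified. They do not show whether the story itself describes what users need in practice, which is the validation question.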

I often say that user acceptance testing is one of the most valuable levels of testing, but often performed at the worst possible time. That is because if process gaps or other major flaws are discovered in UAT testing, there is little time to fix them before release. Also, the options available to fix late-stage defects may be very limited at the end of a project.

Table Of Contents

- Verification vs. Validation

- Customer vs. Producer

- “Testing in the Large” vs. “Testing in the Small”

- How UAT Breaks Some of the “Rules” of Testing

- High Level UAT Planning

- Detailed UAT Planning

- What is a Test Scenario?

- User Acceptance Test Examples

- The Technical Stuff – UAT Test Environments and UAT Testing Tools

- Conclusion

Verification vs. Validation

To truly understand acceptance testing, one must also understand verification and validation.

Verification is “Confirmation by examination and through provision of objective evidence that specified requirements have been fulfilled.” (ISO 9000) Verification is based on specified requirements, such as user requirements. In other words, the question being answered is, “Did we build the system right?”

But, what happens when the requirements are missing or incorrect?

Think about this. Over 50% of software defects can be traced back to requirements-based problems. How often do you get a really well-written set of requirements? Probably, it is less than 100% of the time.

The idea of validation came about many years ago as a way to deal with gaps in specifications.

Validation is “Confirmation by examination and through provision of objective evidence that the requirements for a specific intended use or application have been fulfilled.” (ISO 9000)

It is possible to completely fulfill one or more specified requirements while missing the intended real-world application need.

Acceptance testing is validation. System testing is verification.

Unfortunately, people get these important forms of testing confused due to a wider industry misunderstanding of verification and validation.

Let’s look at another perspective.

Customer vs. Producer

In software, two parties are involved – the producer of the software (developers, testers, support team, and others), and the customers of the software (sponsors, users, developers, testers, etc.).

The perspective taken in testing will depend on which side of the customer/producer equation you fall upon. If you are the customer, you want to focus on acceptance testing to ensure the right system has been purchased and delivered (validation). If you are on the producer side, you want to make sure you are delivering the right system, built the right way (verification). It should be noted that the producer and customer might be in the same organization, or in different ones.

“Testing in the Large” vs. “Testing in the Small”

This difference involves the testing done at a system level (large) as opposed to testing done at a detailed functional level (small).

Many times, UAT testing occurs in the large because the objective is to accept a system or application. It is at the system level that challenges due to size and complexity become a risk. The approach described in this article deals with testing in the large.

However, there are times when UAT may be needed to accept small changes. In those situations, acceptance testing is smaller, faster and easier. This is sometimes the context of agile acceptance testing.

How UAT Breaks Some of the “Rules” of Testing

One reason that people fail to get the best value from user acceptance testing is that they try to apply the same rules to UAT as they would to other forms of testing, such as system testing. Here are some ways that UAT typically differs from other levels of testing.

- In UAT, your main concern is not finding defects.

Instead, your main focus should be that the software, system or application is fit for use in a specific user context. You may find defects while performing that process, but if you focus on only trying to find defects, you will likely miss the larger goal of making sure the system can support user needs in the real world.

- The basis of UAT is not written requirements.

Rather, it is real-world user or business scenarios as well as user acceptance criteria. This is the essence of validation. The risk is that if you base acceptance tests on defined user requirements, you may pass the tests but fail to find where the system does not support real-world needs.

This means that the tests you design for UAT should look like business processes and user scenarios instead of individual functional test cases.

User acceptance criteria are those points that are ideally defined very early – even before a project is initiated. In the case of contracted software, user acceptance criteria should be part of the contract. Contractual acceptance testing is based on acceptance criteria or other items specified in a contract.

- UAT is typically best performed manually.

This is because acceptance testing requires visual evaluation of test results. This evaluation can entail more than just a “pass” or “fail” determination. For example, while performing a UAT test scenario, it may become obvious that the software is difficult to use, or lacks some other characteristic such as reliability, performance, or accessibility.

Another reason UAT lends itself toward manual testing is that there is often little return on investment with test automation. Most acceptance tests are done once toward the end of a project with little repetition of tests. There may be some exceptions to this, such as certain simple tests that can be easily automated as regression tests. Such tests may have been automated and performed earlier in a project.

- UAT is typically performed once on a major project.

Because of this, careful analysis is needed at the test strategy level to decide how much effort in test design will be wise. If the tests can be reused later (such as in regression testing), or if there is high risk, then it makes sense to invest the time and effort to design tests. However, if the need for defined tests is not justified, then a UAT checklist based on acceptance criteria may be adequate.

- Users should play a major role in UAT test design and testing.

Not everyone agrees on the role of users in UAT. I like to involve users as much as possible because:

- They understand real-world conditions and know what won't work in actual usage.

- They will have to use the system sooner or later anyway. This is a great opportunity for them to take a deep dive into how to use the system, even better than training in many cases.

High Level UAT Planning

As with any level or phase of testing, good test planning, analysis and design play a key role in communicating what should be tested and how testing should be performed. UAT is no different.

It is common to have a master test plan that describes the testing needed for a major project. The master test plan may reference other test plans such as a system test plan and a UAT plan. The important thing to note is that the test plans for various levels have different major objectives.

For example, a UAT plan may describe things found in other test plans, such as schedules, roles, risks, environments and tools. But a UAT test plan is often oriented to business or user test objectives as opposed to system test objectives. This is a nuance often missed in planning UAT efforts.

For UAT planning, it is helpful to have a UAT Test Plan Template for consistency and guidance. This UAT template should contain a description of what is needed for planning the UAT effort. A user acceptance testing checklist is also helpful to ensure all the needed tasks have been performed in UAT planning, UAT design and UAT execution.

A UAT plan may outline tests to be performed such as:

- “Validate that users can add customers correctly as documented in business processes”

- “Validate that users can create custom customer reports as needed to support marketing needs”


Detailed UAT Planning

Perhaps nowhere is the difference in UAT seen as clearly as in how detailed tests are planned.

In other levels of testing, tests can be described in snapshot, “cause/effect” formats such as in standalone test cases. This is because tests are needed to verify detailed functionality.

When taking the business process perspective, such as in UAT, scenario-driven tests are needed. However, designing these tests can be a challenge because it is often necessary to weave three things together: process, data, and the passage of time.

What is a Test Scenario?

A test scenario is a described set of test procedures or test scripts that are performed in a specific sequence to accomplish a major functional process.

Some important things to note about test scenarios:

- They are a representation of a workflow that often extends beyond just one person performing a task. For example, a UAT test script for performing a task might have the objective of testing how a user can add a customer to a database. A test scenario, on the other hand, would describe how a user would add a customer to a database, create an order for the customer, pay for the order, ship the product(s) ordered and track the shipment.

- They often extend over a period of days in actual real-life use. This is why people have difficulty in designing a user acceptance test. They lack a framework that simulates the real-world passage of time. The time may extend over hours, days, months, or even years.

- They model the real world. When most people perform UAT, they test one function, scenario or test case at a time. However, that is not how real world processes work most of the time. In reality, there are many processes being performed all at the same time by different people. This requires multiple test scenarios being performed concurrently to simulate this activity.

- Test scenarios are based on workflow processes that can be composed of UAT test scripts and UAT test cases. So, while test scripts and test cases alone may fail to simulate actual practice in performing workflows, they are the building blocks for workflow-based test scenarios.

The easiest way to define a test scenario is to model a business process, then determine which test cases or procedures are needed to define the conditions to be tested. Although I said earlier that it is good to avoid requirements-based tests, I have found use cases (if defined well) to be a good basis for test scenarios, bearing in mind that use cases can also contain errors and gaps.

- Test scenarios require the right test data. Just having a workflow description is not enough. You also need to know which people or things (entities) you need to process through the scenario. I often use the analogy of a plumbing system composed of pipes, faucets and water. To test if the plumbing really works, you need water flowing through the pipes. Then, you can tell if there are any leaks, the amount of water pressure, any discoloration, etc. In this analogy, the pipes represent the process, the water represents the test data, and the faucets represent system controls.
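The points above can be pictured as a simple data structure: a scenario is an ordered set of test scripts, performed by different roles over simulated days, with test data flowing through it. This is only a sketch in Python under my own assumptions. The class names `UatScript` and `UatScenario`, the roles, the day numbers and the test data values are all hypothetical, chosen to mirror the add customer, order, pay, ship and track example.

```python
from dataclasses import dataclass, field


@dataclass
class UatScript:
    name: str          # one task, such as "Add customer"
    performed_by: str  # role that carries out the task
    day: int           # simulated day on which the task is performed


@dataclass
class UatScenario:
    name: str
    test_data: dict                      # the entities processed through the workflow
    scripts: list = field(default_factory=list)

    def add(self, name, performed_by, day):
        self.scripts.append(UatScript(name, performed_by, day))
        return self  # allow chaining

    def roles(self):
        # A scenario usually involves more than one person.
        return sorted({s.performed_by for s in self.scripts})

    def duration_days(self):
        # Span of simulated time covered by the scenario.
        days = [s.day for s in self.scripts]
        return max(days) - min(days) + 1 if days else 0

    def ordered_steps(self):
        # Scripts in the order they should be performed (stable within a day).
        return [s.name for s in sorted(self.scripts, key=lambda s: s.day)]


# Hypothetical scenario and data, for illustration only.
order_to_delivery = (
    UatScenario("Order to delivery", {"customer": "new customer", "order_total": 35.00})
    .add("Add customer", "sales clerk", day=1)
    .add("Create order", "sales clerk", day=1)
    .add("Pay for order", "customer", day=2)
    .add("Ship products", "warehouse staff", day=3)
    .add("Track shipment", "customer", day=5)
)
```

Calling `order_to_delivery.roles()` and `order_to_delivery.duration_days()` makes two of the points above visible at a glance: the workflow crosses several roles, and it spans several simulated days rather than a single sitting.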

User Acceptance Test Examples

An online retailer is launching a new mobile app to allow people to order products from their mobile devices. The company has outsourced the development of the app to a development company that specializes in that industry and type of application on mobile devices.

Testers who work for the retailer will perform user acceptance testing. The testers have defined the most common scenarios, plus alternate and exceptional scenarios. Also, various user profiles have been defined along with various types of orders.

Example test scenarios include:

- New customer orders less than $20 of products in a single order. (small order, no free shipping)

- Existing customer orders less than $20 of products in a single order. (small order, no free shipping)

- New customer orders more than $20 but less than $50 of products in a single order. (medium order, no free shipping)

- Existing customer orders more than $20 but less than $50 of products in a single order. (medium order, no free shipping)

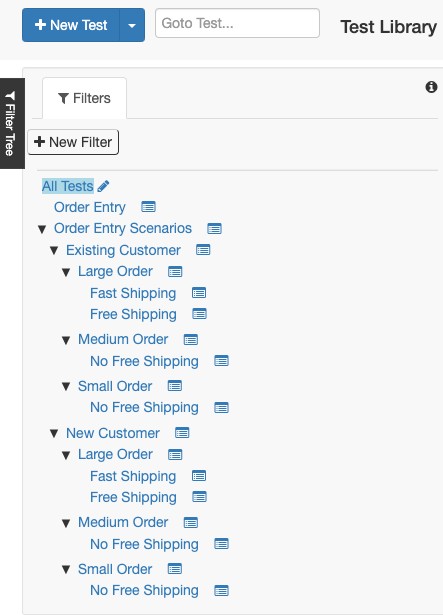

Figure 1 - Test cases and test scenarios organized in PractiTest

Things to note:

- There are multiple conditions that can be varied to achieve various test scenarios. This makes it easier to combine conditions with the effect of reducing the number of total test scenarios.

- There is a structural hierarchy, such as the decomposition of “New Customer” and “Existing Customer”.

- In PractiTest, you can reuse tests from the 'Test Library' (a repository of your tests) in different test sets. So, if you are performing the same test for different major entities such as new and existing customers, you can reuse the same test from the library.

- Testing the full outcome of a scenario requires performing multiple tasks, each described by a test script. The ordering of the tasks will depend on the specific objective of the scenario.

- These tasks will need to be performed over a simulated period of time. It helps to organize the test by creating a series of co-ordinated test events. I call these “test cycles”. In PractiTest these can be ordered as “Test Sets”.

- There are many conditions involved in an order entry process and it would be impossible to test all combinations. In a scenario-based approach, you have the ability to define “most likely”, “most critical”, “error prone” scenarios with the understanding that not all condition combinations will be covered. However, remember that the goal is to validate real-world usage, not to find all defects.
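The way conditions combine into scenarios can be sketched in a few lines of Python. This is an illustration only: the two conditions (customer type and order size) come from the example scenarios above, while the function name `build_scenarios` and the wording of the generated descriptions are my own assumptions.

```python
from itertools import product

# Conditions taken from the example scenarios above.
customer_types = ["New customer", "Existing customer"]
order_sizes = {
    "small": "less than $20",
    "medium": "more than $20 but less than $50",
}


def build_scenarios(customer_types, order_sizes):
    """Return one scenario description per combination of conditions."""
    scenarios = []
    for customer, (size, amount) in product(customer_types, order_sizes.items()):
        scenarios.append(
            f"{customer} orders {amount} of products in a single order ({size} order)"
        )
    return scenarios


for scenario in build_scenarios(customer_types, order_sizes):
    print(scenario)
```

With two customer types and two order sizes there are four combinations. Each additional condition multiplies the total, which is why selecting the most likely, most critical and most error-prone combinations matters more than trying to cover them all.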

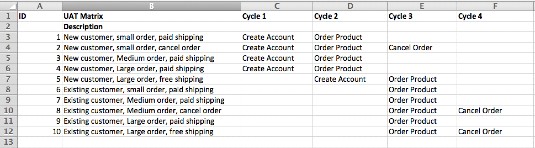

Sample UAT Test Matrix

I normally do not advocate the use of spreadsheets for test management. Tools such as PractiTest are much more efficient and effective to create and maintain test cases and test procedures. However, this table-based example (Figure 2) shows how the test cycle concept works to plan the execution of test scenarios.

Figure 2 – Conceptual View of a UAT Matrix
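The test cycle idea can also be sketched in code. The following Python example is not a reproduction of Figure 2. The scenario names, the script names, the cycle numbers and the function `scripts_by_cycle` are hypothetical, used only to show how scripts from several scenarios end up being performed in the same cycle.

```python
from collections import defaultdict

# Hypothetical plan: scenario name -> list of (test cycle number, test script).
plan = {
    "New customer, small order": [
        (1, "Add customer"),
        (1, "Create order"),
        (2, "Pay for order"),
        (3, "Ship products"),
    ],
    "Existing customer, medium order": [
        (1, "Create order"),
        (2, "Pay for order"),
        (2, "Ship products"),
        (3, "Track shipment"),
    ],
}


def scripts_by_cycle(plan):
    """Group the scripts of all scenarios by the test cycle in which they run."""
    cycles = defaultdict(list)
    for scenario, steps in plan.items():
        for cycle, script in steps:
            cycles[cycle].append((scenario, script))
    return dict(sorted(cycles.items()))


for cycle, work in scripts_by_cycle(plan).items():
    print(f"Test cycle {cycle}:")
    for scenario, script in work:
        print(f"  {script} ({scenario})")
```

Reading the plan by cycle rather than by scenario shows which tasks from different scenarios run side by side, which is how the matrix simulates concurrent real-world activity over time.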

The Technical Stuff – UAT Test Environments and UAT Testing Tools

In new development projects, it is common for the UAT test environment to become the actual production environment. This can be a great benefit for all levels of testing, not just UAT.

In other cases where testing must occur in a safe environment on an ongoing basis, a dedicated test environment that closely resembles production configurations is needed. Test data should not be a copy of production data as it may contain private data. Plus, you don’t want test e-mails, notices, etc. going to actual addresses by accident.
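One way to avoid both problems is to generate synthetic test data instead of copying production records. The following is a minimal sketch in Python. The function name `make_test_customers`, the field names and the sample names are assumptions made for illustration. The `example.com` domain is reserved for documentation and testing, so mail addressed to it cannot reach a real customer.

```python
import random


def make_test_customers(count, seed=0):
    """Create synthetic customer records that contain no production data."""
    rng = random.Random(seed)  # fixed seed so the same data can be recreated
    first_names = ["Alex", "Sam", "Jordan", "Taylor"]
    last_names = ["Test", "Sample", "Demo"]

    customers = []
    for i in range(1, count + 1):
        customers.append({
            "customer_id": f"UAT-{i:04d}",
            "name": f"{rng.choice(first_names)} {rng.choice(last_names)}",
            # Reserved domain: test e-mails cannot reach real addresses.
            "email": f"uat.customer{i}@example.com",
            # Alternate between new and existing customers for scenario coverage.
            "is_new_customer": i % 2 == 1,
        })
    return customers


for customer in make_test_customers(3):
    print(customer)
```

Using a fixed seed means the same data set can be rebuilt if the environment is refreshed between test cycles.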

UAT Testing Tools

As mentioned earlier, UAT is often a manual test if the objective is to get the users’ acceptance or evaluation. However, other tools that are helpful include test management tools, test data creation and management tools, and defect tracking tools.

In some cases, test automation can be applied in UAT for regression tests, performance testing, and security testing. These are situations that require repeatability but also require significant effort to implement. If test automation is used in UAT, someone with technical knowledge in using the tool is often needed.

Conclusion

For UAT to be effective, it should be seen as validation as opposed to verification. UAT can be seen in both large and small contexts. In the large context, test scenarios are a good way to simulate real-world processes. In the smaller context, test cases and/or test procedures are a good way for users to validate functionality that is smaller in scope.

PractiTest is an ideal tool to define and manage user acceptance tests, including the ability to group certain tests into test cycles to help co-ordinate their execution.